Announcing Aerospike 4.7 – the First Commercial Database to Support the Intel® Ethernet 800 Series with ADQ

Aerospike’s industry-leading performance increased by more than 75% when leveraging ADQ!

Today, Aerospike announced the availability of Aerospike 4.7, which features the following product enhancements:

Support for the Intel® Ethernet 800 Series with Application Device Queues (ADQ)

New features released to further optimize database operations

1. Support for the Intel® Ethernet 800 Series with Application Device Queues (ADQ)

The Aerospike 4.7 Enterprise Edition release continues to drive innovation by becoming the first commercial database to support the Intel® Ethernet 800 Series with Application Device Queues (ADQ). The 800 Series is a family of PCIe cards and controllers supporting up to 100 Gigabit Ethernet. While delivering a massive increase in bandwidth, the 800 Series incorporates innovations to maximize throughput and minimize latency through the use of ADQ.

NICs sort related network packets into separate device queues, which makes processing more efficient by keeping high-priority traffic from getting stuck behind slow requests. ADQ sets the bar higher by letting applications define tailored rules (Traffic Classes) for sorting packets into device queues. The 800 Series NICs sort packets directly using these rules, offloading this work from the host processor entirely.

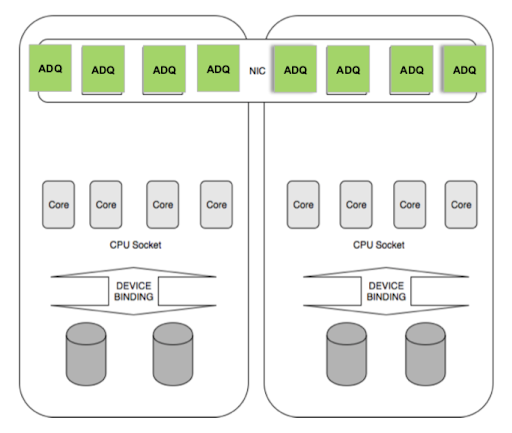

The Aerospike workload involves many clients sending database requests to the Aerospike server. These are dispatched in parallel to many service threads. All requests are treated equally, so the ADQ strategy is to spread network traffic equally to all the CPU cores in the system. We have defined one device queue for each CPU core in the system and configured each queue to generate service interrupts on a particular CPU. The Traffic Classes also route all requests from a given client to the same device queue, as shown below.

Aligning device queues and CPU cores reduces context switching overhead. Better still, it keeps data in local processor caches. Together these factors contribute to lower latency, increased response time predictability and higher throughput. Busy polling was also employed: it reduces interrupts by servicing all the packets that have accumulated in a queue in one pass. A 50 microsecond polling interval was found to give optimal results.
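The queue-per-core layout described above can be illustrated with a short Python sketch. This is not Aerospike or Intel code: on real hardware the 800 Series NIC applies the Traffic Class rules itself. The function names, the use of a CRC32 hash and the core count are assumptions made purely for illustration. The only point is that a deterministic mapping keeps every request from one client connection on the same queue, and that each queue is tied to one CPU.

```python
import zlib


def queue_for_client(client_ip: str, client_port: int, num_queues: int) -> int:
    """Return a (hypothetical) device queue index for a client connection.

    The same client address always maps to the same queue.
    """
    if num_queues <= 0:
        raise ValueError("num_queues must be positive")
    key = f"{client_ip}:{client_port}".encode()
    # CRC32 is stable across runs, unlike Python's built-in hash() for str.
    return zlib.crc32(key) % num_queues


def cpu_for_queue(queue_index: int) -> int:
    """Assumed 1:1 layout: queue i raises its interrupts on CPU i."""
    return queue_index


NUM_CORES = 28  # illustrative value only
queue = queue_for_client("10.0.0.7", 51234, NUM_CORES)
cpu = cpu_for_queue(queue)
```

Because the mapping is a pure function of the client address, repeated requests from that client land on the same queue and therefore on the same core's caches.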

Only a few updates were required to allow the Aerospike server codebase to support ADQ. It turns out the server was already structured to tie network processing to particular threads. This is an essential part of the existing auto-pinning mechanism, which allows multiple Aerospike instances running on a multi-socket Non-Uniform Memory Access (NUMA) system to achieve higher performance by each servicing a particular NUMA domain. A new “auto-pin ADQ” configuration option ties server transaction threads to the CPU core servicing the device queue via the driver-assigned NAPI ID.
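As a rough illustration of pinning by NAPI ID, the sketch below assigns each connection to the worker that owns the connection's NAPI ID. It is a conceptual model only, not the Aerospike server implementation: the class name `NapiDispatcher`, the NAPI ID values and the worker numbering are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Hashable, List


class NapiDispatcher:
    """Route connections to the worker that services their NAPI ID (hypothetical)."""

    def __init__(self, napi_to_worker: Dict[int, int]) -> None:
        # Assumed to be known up front: which worker (and thus which CPU core)
        # services each driver-assigned NAPI ID.
        self.napi_to_worker = dict(napi_to_worker)
        self.assigned: Dict[int, List[Hashable]] = defaultdict(list)

    def assign(self, conn_id: Hashable, napi_id: int) -> int:
        """Record the connection under its worker and return the worker index."""
        worker = self.napi_to_worker[napi_id]  # KeyError for an unknown NAPI ID
        self.assigned[worker].append(conn_id)
        return worker


dispatcher = NapiDispatcher({101: 0, 102: 1})  # made-up NAPI IDs
dispatcher.assign("conn-a", 101)
dispatcher.assign("conn-b", 102)
```

Keeping a connection on the worker that shares a core with its device queue is what lets the packet data stay in that core's cache.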

Aerospike with ADQ performance test results

ADQ performance was measured in a test environment comprising one dual-socket, dual-NIC server and six clients, all connected to a 100G switch. Performance data were gathered by running 18 instances of the Aerospike C benchmark (3 per client) against the server. Relative performance was measured by comparing ADQ NUMA pinning with the baseline case of CPU pinning.

> Detailed information on the test environment can be found here. Below are the highlights:

- Intel® Xeon® Platinum 8280 Server (2.7 GHz, 28 cores)
  - 768 GB total DRAM
  - 2 Intel® Ethernet 800 Series 100G NIC cards
  - 2 Aerospike 4.7 instances (NUMA configuration with CPU and ADQ pinning)
- Intel® Xeon® E5-2699 v4 clients (2.2 GHz, 22 cores)
  - 125 GB total DRAM
  - Single Intel® Ethernet 700 Series 40G NIC card
  - 3 instances of Aerospike C benchmark (async mode) per client

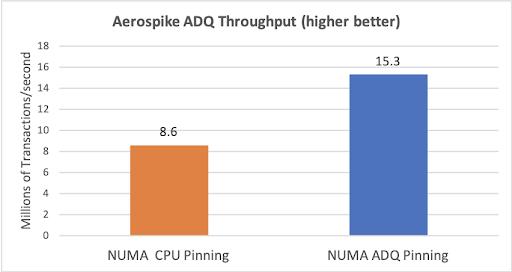

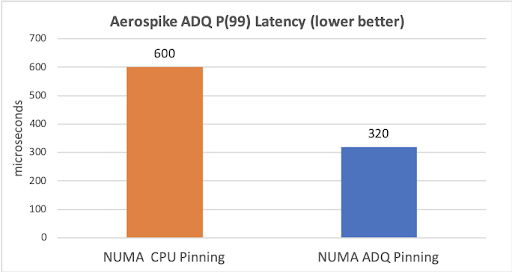

Test results are summarized below, and explained fully in the upcoming ADQ Technical Overview. With ADQ enabled, throughput was recorded at 15.3M transactions/sec (>75% improvement). With regard to latency, 99% of requests completed in under 320 µs (>45% improvement in response time predictability).

2. New features released to further optimize database operations

Filtering for All Operations – predicate filter support for batch, read, write, delete and record UDF transactions. The predicate filter support was already in place for scans and queries, but now it has been expanded to all operations. Now it is possible to perform all types of operations based on a filtered set of records.
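The idea of a filtered operation can be sketched in a few lines of Python. This is a conceptual model using a plain dictionary, not the Aerospike client API; `apply_if`, `delete` and the `visits` bin are hypothetical names chosen for illustration. The operation runs only when the record exists and the predicate accepts it.

```python
from typing import Callable, Dict

Record = Dict[str, int]
Store = Dict[str, Record]


def apply_if(
    records: Store,
    key: str,
    predicate: Callable[[Record], bool],
    operation: Callable[[Store, str], None],
) -> bool:
    """Run `operation` on `key` only if the record passes `predicate`.

    Returns True when the operation was applied, False when it was filtered out.
    """
    record = records.get(key)
    if record is None or not predicate(record):
        return False
    operation(records, key)
    return True


def delete(records: Store, key: str) -> None:
    """Example operation: remove the whole record."""
    del records[key]


store: Store = {"a": {"visits": 3}, "b": {"visits": 10}}
apply_if(store, "a", lambda r: r["visits"] > 5, delete)  # filtered out, "a" is kept
apply_if(store, "b", lambda r: r["visits"] > 5, delete)  # applied, "b" is removed
```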

Independent Scans – scans now use their own thread(s) instead of sharing a thread pool, and are given a configurable ‘record-per-second’ limit instead of a priority. By using their own dedicated threads, scans will no longer interfere with each other and can be throttled independently.
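A per-scan rate limit of this kind can be sketched as a simple pacing loop. The code below is an illustration under stated assumptions, not Aerospike's scan implementation: the class name `ScanThrottle`, the `records_per_second` parameter and the evenly spaced pacing strategy are all hypothetical. The clock and sleep functions are injectable so the behavior can be checked without real waiting.

```python
import time
from typing import Callable


class ScanThrottle:
    """Pace a single scan to at most `records_per_second` (hypothetical sketch)."""

    def __init__(
        self,
        records_per_second: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        if records_per_second <= 0:
            raise ValueError("records_per_second must be positive")
        self.interval = 1.0 / records_per_second
        self.clock = clock
        self.sleep = sleep
        self.next_allowed = clock()  # the first record may go immediately

    def wait_for_slot(self) -> float:
        """Block until the next record may be processed; return the delay slept."""
        now = self.clock()
        delay = max(0.0, self.next_allowed - now)
        if delay > 0:
            self.sleep(delay)
        # Schedule the following slot one interval after this one.
        self.next_allowed = max(now, self.next_allowed) + self.interval
        return delay
```

Each scan would hold its own throttle object and call `wait_for_slot()` before processing a record, so slowing one scan has no effect on the pace of another.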

New Background Scan/Query – a new type of background scan and query that performs write-only operations. This makes it possible to group and execute multiple write operations at once, instead of utilizing User Defined Functions.

Delete as an Operation – enables an atomic read followed by a delete. An entire record can now be deleted as an operation, eliminating the need to create User Defined Functions for such cases.
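What "atomic read then delete" means can be shown with an in-memory stand-in. This is not the Aerospike client API; `RecordStore` and `read_and_delete` are hypothetical names, and a lock plays the role that the server's per-record transaction handling would play. The read and the delete happen under the same lock, so no other writer can change the record between the two steps.

```python
import threading
from typing import Dict, Optional

Bins = Dict[str, object]


class RecordStore:
    """Toy in-memory store illustrating an atomic read-then-delete."""

    def __init__(self) -> None:
        self._records: Dict[str, Bins] = {}
        self._lock = threading.Lock()

    def put(self, key: str, bins: Bins) -> None:
        with self._lock:
            self._records[key] = dict(bins)

    def read_and_delete(self, key: str) -> Optional[Bins]:
        """Return the record's bins and remove it in one step, or None if absent."""
        with self._lock:
            return self._records.pop(key, None)


store = RecordStore()
store.put("session:42", {"user": "alice"})
last_value = store.read_and_delete("session:42")  # record is gone afterwards
```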

Removal of Transaction Queues – service threads will now do the job previously shared between transaction queues and service threads in prior versions of Aerospike. This has been shown to boost performance while simplifying config. As part of this change, service threads will become dynamically configurable.