Aerospike as the execution state and agent store for LangGraph

Use Aerospike with LangGraph to persist execution state, enable fast checkpointing, and manage long-lived agent memory. Learn setup, TTL handling, and O(1) data access.

LangGraph provides a default implementation for checkpointing state and persisting data, but it is not built for production-scale workloads. Aerospike’s integration introduces a persistence layer designed to deliver the predictable behavior that agentic systems require, keeping them responsive, stable, and operationally simple as they scale.

In this blog, we first show how to use the integration, and then explain how it works under the hood.

Getting started with Aerospike LangGraph

The implementation is available in our public repository and referenced in the LangChain docs. It includes a Docker-based Aerospike setup, reference implementations of AerospikeSaver and AerospikeStore, and basic tests that exercise checkpoint resume, TTL behavior, and namespace-scoped store access.

To try it, start an Aerospike container, configure the saver and store in a LangGraph app, and run the included examples to observe execution recovery and agent state persistence.

Installation

Install both packages – langgraph-checkpoint-aerospike and langgraph-store-aerospike:

    pip install -U langgraph-store-aerospike langgraph-checkpoint-aerospike

Running Aerospike locally

Start Aerospike using the Aerospike Docker Image:

    docker run -d --name aerospike \
      -p 3000-3002:3000-3002 \
      container.aerospike.com/aerospike/aerospike-server

Configuration

Both the store and checkpointer use the same Aerospike connection settings:

    export AEROSPIKE_HOST=127.0.0.1
    export AEROSPIKE_PORT=3000

Using Aerospike checkpointing
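Since both components share these settings, one way to avoid hardcoding the host and port in the examples that follow is to read them from the environment. The sketch below assumes your application, rather than the library, reads the variables; the helper name `aerospike_client_config` and the fallback defaults are hypothetical. It returns the same `{"hosts": [...]}` dictionary that the examples pass to `aerospike.client()`.

```python
import os


def aerospike_client_config(env=None):
    """Build a client config dict from AEROSPIKE_HOST and AEROSPIKE_PORT.

    Hypothetical helper: falls back to 127.0.0.1:3000 when a variable is unset.
    """
    env = os.environ if env is None else env
    host = env.get("AEROSPIKE_HOST", "127.0.0.1")
    port = int(env.get("AEROSPIKE_PORT", "3000"))  # env values are strings
    return {"hosts": [(host, port)]}


# Passing a plain dict here keeps the example independent of the real environment.
config = aerospike_client_config({"AEROSPIKE_HOST": "10.0.0.5", "AEROSPIKE_PORT": "3100"})
print(config)
```

The resulting dictionary can then be handed to `aerospike.client(config).connect()` in place of the literal used in the examples.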

The Aerospike checkpointer persists LangGraph execution state and enables resume from any checkpoint. Below, we connect to Aerospike, plug in the checkpointer, and run a graph execution that is automatically persisted and resumable via a thread ID.

    import aerospike
    from langgraph.checkpoint.aerospike import AerospikeSaver

    # Connect to the local Aerospike node
    client = aerospike.client(
        {"hosts": [("127.0.0.1", 3000)]}
    ).connect()

    saver = AerospikeSaver(
        client=client,
        namespace="langgraph",
    )

    # `graph` is your LangGraph graph, defined elsewhere in your application
    compiled = graph.compile(checkpointer=saver)

    # The thread ID identifies the execution to persist and resume
    compiled.invoke(
        {"input": "hello"},
        config={
            "configurable": {
                "thread_id": "demo-thread"
            }
        }
    )

Using the Aerospike store

The Aerospike store is used for long-lived agent data, such as user profiles, extracted entities, and cached tool outputs. Here, we initialize the store and walk through common operations, such as writing, reading, batching, searching, and deleting data within structured namespaces.

    import aerospike
    from langgraph.store.aerospike import AerospikeStore
    from langgraph.store.base import PutOp, GetOp, SearchOp

    client = aerospike.client(
        {"hosts": [("127.0.0.1", 3000)]}
    ).connect()

    store = AerospikeStore(
        client=client,
        namespace="langgraph",
        set="store",
    )

    # Write data
    store.put(
        namespace=("users", "profiles"),
        key="user_123",
        value={"name": "Alice", "age": 30},
    )

    # Read data
    item = store.get(
        namespace=("users", "profiles"),
        key="user_123",
    )
    print(item.value)

    # Batch operations
    results = store.batch([
        PutOp(namespace=("documents",), key="doc1", value={"status": "draft"}),
        GetOp(namespace=("documents",), key="doc1"),
    ])

    # Search within a namespace
    search_results = store.search(
        namespace_prefix=("documents",),
        filter={"status": {"$eq": "draft"}},
        limit=10,
    )

    # Delete data
    store.delete(namespace=("users", "profiles"), key="user_123")

Using the integration is straightforward, but the performance and reliability benefits come from how the checkpointing layer is designed. To understand that, we need to look at how the execution state is actually stored in Aerospike.

Under the hood: Checkpointing and execution state in the Aerospike integration

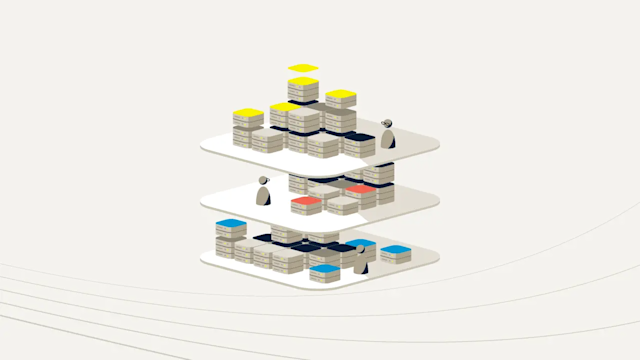

Checkpointing in LangGraph guarantees resumability, but how checkpoints are stored determines whether that guarantee holds under real production load. In Aerospike, we implement checkpointing using a three-set layout as shown in the code block below. The fundamental reason for this three-set layout is to separate different types of data (state, metadata, and history) to optimize performance, ensuring that common read/write operations remain fast and avoid read amplification.

    class AerospikeSaver(BaseCheckpointSaver):
        def __init__(
            self,
            client: aerospike.Client,
            namespace: str = "test",
            set_cp: str = "lg_cp",          # full checkpoint state
            set_writes: str = "lg_cp_w",    # tool calls made during execution
            set_meta: str = "lg_cp_meta",   # timeline and latest-checkpoint metadata
            ttl: Optional[Dict[str, Any]] = None,
        ) -> None: ...

The primary set (set_cp) stores the fully materialized checkpoint state keyed by (thread_id, checkpoint_namespace, checkpoint_id), enabling constant-time resume with a single read. A secondary set (set_meta) serves two purposes: it stores lightweight checkpoint metadata in append-only order, so users can enumerate execution history without scanning full state records, and it records the latest checkpoint for a given thread and namespace, making “resume from latest” an O(1) lookup. The third set (set_writes) tracks the tool calls made by the agentic system. This separation keeps hot paths fast while avoiding read amplification.
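To make the three-set layout concrete, here is a minimal in-memory sketch that uses plain dictionaries in place of Aerospike sets. It is not the AerospikeSaver implementation: the class name `ThreeSetModel`, its methods, and the choice to model set_meta as two dictionaries (a timeline and a latest pointer) are assumptions made purely for illustration.

```python
from typing import Any, Dict, List, Optional, Tuple

CheckpointKey = Tuple[str, str, str]  # (thread_id, checkpoint_ns, checkpoint_id)
ThreadKey = Tuple[str, str]           # (thread_id, checkpoint_ns)


class ThreeSetModel:
    """Dictionary stand-in for the state, metadata, and writes sets."""

    def __init__(self) -> None:
        self.set_cp: Dict[CheckpointKey, Dict[str, Any]] = {}  # full state per checkpoint
        self.set_writes: Dict[CheckpointKey, List[Any]] = {}   # writes per checkpoint
        self.timeline: Dict[ThreadKey, List[str]] = {}         # append-only checkpoint ids
        self.latest: Dict[ThreadKey, str] = {}                 # pointer to newest checkpoint

    def put(self, thread_id: str, ns: str, checkpoint_id: str,
            state: Dict[str, Any], writes: Tuple[Any, ...] = ()) -> None:
        key = (thread_id, ns, checkpoint_id)
        self.set_cp[key] = dict(state)
        self.set_writes[key] = list(writes)
        self.timeline.setdefault((thread_id, ns), []).append(checkpoint_id)
        self.latest[(thread_id, ns)] = checkpoint_id

    def get_latest(self, thread_id: str, ns: str) -> Optional[Dict[str, Any]]:
        """Two key lookups: the latest pointer, then the full state."""
        checkpoint_id = self.latest.get((thread_id, ns))
        if checkpoint_id is None:
            return None
        return self.set_cp[(thread_id, ns, checkpoint_id)]

    def history(self, thread_id: str, ns: str) -> List[str]:
        """Enumerate checkpoints without touching the large state records."""
        return list(self.timeline.get((thread_id, ns), []))


model = ThreeSetModel()
model.put("demo-thread", "", "cp-1", {"step": 1})
model.put("demo-thread", "", "cp-2", {"step": 2}, writes=(("tool", "search"),))
print(model.get_latest("demo-thread", ""))
print(model.history("demo-thread", ""))
```

The point of the sketch is that neither "resume from latest" nor history enumeration needs to iterate over the state records, which is the property the separate sets are meant to preserve.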

Each checkpoint record also stores its parent checkpoint ID directly in Aerospike bins, forming a durable execution lineage. Rather than reconstructing history from logs or replaying prior steps, users can resume from any checkpoint by following this parent chain. Storing lineage at the bin level allows Aerospike to update metadata independently from large state payloads, preserving write efficiency while enabling precise branching, retries, and backtracking.

    bins = {
        "thread_id": thread_id,
        "checkpoint_ns": checkpoint_ns,
        "checkpoint_id": checkpoint_id,
        "p_checkpoint_id": parent_checkpoint_id,
        "cp_type": cp_type,
        "checkpoint": cp_bytes,
        "meta_type": meta_type,
        "metadata": meta_bytes,
        "ts": ts,
    }

Checkpoints are written in an append-only, accumulative model; every new checkpoint contains the full graph state up to that point. This is a deliberate trade-off that leverages Aerospike’s high write throughput to eliminate expensive replays during resume. Reads remain predictable and constant-time, which is critical for agent systems that may need to recover mid-execution or after failure.
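The interplay between the parent pointer and the accumulative model can be sketched with a small in-memory example. The records below mirror only two of the bins (p_checkpoint_id and checkpoint); the payloads and the helper names `lineage` and `resume_state` are made up for illustration and do not come from the integration.

```python
from typing import Dict, List, Optional

# Each checkpoint holds the full state so far, plus a pointer to its parent.
records: Dict[str, dict] = {
    "cp-1": {"p_checkpoint_id": None, "checkpoint": {"messages": ["hello"]}},
    "cp-2": {"p_checkpoint_id": "cp-1", "checkpoint": {"messages": ["hello", "hi there"]}},
    "cp-3": {"p_checkpoint_id": "cp-2", "checkpoint": {"messages": ["hello", "hi there", "bye"]}},
}


def lineage(checkpoint_id: str) -> List[str]:
    """Follow parent pointers back to the root, newest first."""
    chain: List[str] = []
    current: Optional[str] = checkpoint_id
    while current is not None:
        chain.append(current)
        current = records[current]["p_checkpoint_id"]
    return chain


def resume_state(checkpoint_id: str) -> dict:
    """Resume is a single lookup because no earlier checkpoint must be replayed."""
    return records[checkpoint_id]["checkpoint"]


print(lineage("cp-3"))
print(resume_state("cp-2"))
```

Walking the chain is only needed to inspect or branch from history; resuming from any single checkpoint reads one record.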

    "ttl": {
        "strategy": "delete",
        "sweep_interval_minutes": 60,
        "default_ttl": 43200,  # in minutes (30 days)
    }

TTL configuration further differentiates execution state from durable memory. Aerospike enforces TTL natively at the record level, allowing users to configure short-lived TTLs for checkpoints while retaining long-lived or permanent records for stores. This cleanly separates short-term execution memory from long-term agent memory without background cleanup jobs or application-level garbage collection.
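One detail worth spelling out is the unit conversion: the configuration expresses default_ttl in minutes, while Aerospike record TTLs are expressed in seconds. The sketch below shows that arithmetic under the assumption that the conversion is a plain multiplication by 60; the helper `ttl_seconds` is hypothetical and is not taken from the integration's source.

```python
from typing import Any, Dict, Optional


def ttl_seconds(ttl_config: Optional[Dict[str, Any]],
                override_minutes: Optional[float] = None) -> Optional[int]:
    """Return a record TTL in seconds, or None when no expiry is configured.

    A per-call override (in minutes) takes precedence over default_ttl.
    """
    minutes = override_minutes
    if minutes is None and ttl_config is not None:
        minutes = ttl_config.get("default_ttl")
    if minutes is None:
        return None
    return int(minutes * 60)


checkpoint_ttl = {"strategy": "delete", "sweep_interval_minutes": 60, "default_ttl": 43200}
print(ttl_seconds(checkpoint_ttl))       # 43200 minutes -> 2592000 seconds (30 days)
print(ttl_seconds(checkpoint_ttl, 1.5))  # 90 seconds for a short-lived record
print(ttl_seconds(None))                 # None: no TTL configured
```

With the Aerospike Python client, a value like this would typically be supplied through the record metadata on write, for example `meta={"ttl": seconds}`.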

Agent store model with Aerospike

LangGraph’s store abstraction is meant to be the “memory substrate” for agents: durable facts, user preferences, tool outputs, and any long-lived state you want to retrieve later. In our Aerospike integration, the store is implemented as a deterministic key-value layout that preserves LangGraph’s hierarchical namespace model while staying aligned with Aerospike’s primary-key performance.

    class AerospikeStore(BaseStore):
        supports_ttl: bool = True

        def __init__(
            self,
            client: aerospike.Client,
            namespace: str = "langgraph",  # Aerospike namespace
            set: str = "store",
            ttl_config: Optional[TTLConfig] = None,
        ) -> None: ...

At a high level, every stored item lives under a namespace path (a folder-like structure) plus a user-chosen key (the file within it). We encode that structure directly into the Aerospike primary key so retrieval stays O(1).

For example, a user might write preferences under a namespace like ("app", "users", user_id, "prefs"), while storing agent-derived entities under something like ("app", "users", user_id, "entities"). Because the namespace is part of the primary key, Aerospike lookups remain fast and predictable even as the memory grows.
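As a rough illustration of how a namespace path and key can be folded into one deterministic primary key, consider the sketch below. The `|` separator and the `primary_key` helper are assumptions; the integration's actual encoding may differ.

```python
from typing import Tuple

SEPARATOR = "|"  # hypothetical delimiter, chosen only for this example


def primary_key(namespace: Tuple[str, ...], key: str) -> str:
    """Join the namespace path and key into a single deterministic string."""
    for part in (*namespace, key):
        # Reject parts containing the separator so two different paths
        # can never collapse into the same primary key.
        if SEPARATOR in part:
            raise ValueError(f"{part!r} must not contain {SEPARATOR!r}")
    return SEPARATOR.join((*namespace, key))


prefs_key = primary_key(("app", "users", "user_123", "prefs"), "theme")
entities_key = primary_key(("app", "users", "user_123", "entities"), "theme")
print(prefs_key)     # app|users|user_123|prefs|theme
print(entities_key)  # app|users|user_123|entities|theme
```

Because the same namespace and key always yield the same string, a read never needs to search: it recomputes the key and performs one lookup.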

    def put(
        self,
        namespace: tuple[str, ...],  # user-defined namespace path
        key: str,
        value: dict[str, Any],
        index: Literal[False] | list[str] | None = None,
        *,
        ttl: float | None | NotProvided = NOT_PROVIDED,
    ) -> None: ...

Conceptually, each record carries its identity (namespace and key) alongside the stored value:

    bins = {
        "namespace": list(namespace),
        "key": key,
        "value": value,
        "created_at": created_at,
        "updated_at": now,
    }

Using the store is intentionally simple. Users can put an item (value + optional metadata + optional TTL), get it back by namespace and key, and list or search within a namespace scope to retrieve related memory. The important difference versus a naïve key-value table is that the namespace hierarchy becomes a first-class tool; retrieval can be scoped precisely to the memory domain you want (for example, only “prefs,” only “entities,” or only “recent tool outputs”), rather than loading all data for a user and filtering in application code. This access pattern maps directly onto Aerospike’s primary-key model, making it a strong store backend for LangGraph workloads where predictable latency, clear isolation, and bounded memory access are critical.
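Namespace-scoped retrieval can be pictured as a prefix match on the namespace tuple. The sketch below models that behavior over an in-memory dictionary with made-up items; it illustrates the semantics only and says nothing about how the Aerospike-backed store executes a search.

```python
from typing import Any, Dict, List, Tuple

Namespace = Tuple[str, ...]

# Made-up items keyed by (namespace path, key).
items: Dict[Tuple[Namespace, str], Dict[str, Any]] = {
    (("app", "users", "u1", "prefs"), "theme"): {"value": "dark"},
    (("app", "users", "u1", "entities"), "employer"): {"value": "Acme"},
    (("app", "users", "u2", "prefs"), "theme"): {"value": "light"},
}


def search(namespace_prefix: Namespace) -> List[Tuple[Namespace, str]]:
    """Return (namespace, key) pairs whose namespace starts with the prefix."""
    n = len(namespace_prefix)
    return sorted((ns, key) for (ns, key) in items if ns[:n] == namespace_prefix)


# Only u1's preferences, without touching u1's entities or u2's data.
print(search(("app", "users", "u1", "prefs")))
# Everything stored for u1.
print(search(("app", "users", "u1")))
```

A narrower prefix returns a smaller result set, which is what keeps memory access bounded to the domain the agent actually needs.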

The Aerospike integration for LangGraph delivers a durable, high-performance persistence layer for complex agent workflows. We covered the AerospikeSaver's three-set layout for constant-time resume and fast checkpointing, and the AerospikeStore's deterministic key-value layout for O(1) retrieval of long-lived agent memory. These tools enable users to confidently execute high-throughput agent systems, guaranteeing predictable latency and reliable resumability through durable execution lineage and native TTL-based state cleanup.