Customer Case Study

About MGID

MGID is a global advertising platform that helps brands and publishers succeed on the open web with performance-driven, AI-powered native advertising solutions. With its suite of privacy-first technology, MGID delivers high-quality ads in brand-safe environments, reaching over 1 billion unique monthly visitors. By focusing on performance and user experience, MGID’s diverse ad formats (native, display, and video) help advertisers achieve measurable results while enabling publishers to effectively monetize their audiences.

With a global presence spanning 18 offices, MGID’s investment in technology, talent, and strategic partnerships continues to fuel its five-year streak of double-digit year-on-year growth.

Challenge

Outgrowing a cache-centric architecture

By 2013, MGID needed to expand personalization and retargeting across multiple regions. Early experiments with Redis did not meet the requirements for geographic distribution, horizontal scalability, and production reliability.

The company required active-active operation across regions, consistent behavior across data centers, and high availability without fragile tuning.

Fan-out on every request

Each ad decision triggers multiple dependent lookups. A single interaction can require three to four reads for user profile data, cookie or IP resolution, and partner ID matching in real-time bidding flows.

In this architecture, variability compounds. A single slow lookup can affect the entire decision path. With tens of thousands of requests per second, rare latency spikes become user-visible issues.
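To make the compounding effect concrete, here is a minimal sketch that treats each lookup as independently slow with a small probability. The independence assumption, the function name `slow_request_probability`, and the 1% per-lookup figure are illustrative assumptions, not MGID measurements.

```python
def slow_request_probability(per_lookup_slow: float, lookups: int) -> float:
    """Probability that at least one of `lookups` independent reads is slow.

    Assumes each read is slow with the same probability and that reads
    are independent of each other (a simplification).
    """
    if not 0.0 <= per_lookup_slow <= 1.0:
        raise ValueError("per_lookup_slow must be between 0 and 1")
    if lookups < 0:
        raise ValueError("lookups must be non-negative")
    # P(at least one slow) = 1 - P(all fast)
    return 1.0 - (1.0 - per_lookup_slow) ** lookups


if __name__ == "__main__":
    # Hypothetical 1% chance that any single lookup misses its latency target.
    for n in (1, 3, 4):
        p = slow_request_probability(0.01, n)
        print(f"{n} lookup(s): {p:.2%} of requests see at least one slow read")
```

Under these assumptions, a request that needs four lookups is almost four times as likely to hit a slow read as a request that needs one. Because the lookups are dependent, their latencies also add up along the decision path.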

Volatility as a baseline

Traffic shifts by region, season, and campaign. Baseline writes average around 5,000 per second, but periodic jobs and peak events can spike writes to 150,000 per second.

MGID was not optimizing for steady-state performance. It needed a data layer that behaved predictably as demand, geography, and access patterns changed.

Solution

Distributed user profile store at scale

In 2014, MGID adopted Aerospike as its real-time user profile store and decisioning backbone.

Today, MGID stores 2 to 2.5 billion user profiles in Aerospike. Most ad requests result in multiple Aerospike reads, typically completing in under one millisecond. The database layer is not a performance bottleneck and does not require continuous re-optimization.

Online feature store for ML-driven decisions

Aerospike functions as MGID’s online feature store. Impressions, clicks, and conversions are written in near real time and immediately available for subsequent interactions.

Data streams into Apache Kafka and warehouse systems for analytics and model training. Updated features and models are then deployed back into production, creating a closed loop between live traffic and machine learning (ML) pipelines.

Active-active across four regions

MGID operates peer Aerospike clusters in four regions: Europe, two locations in the United States, and Asia. Cloudflare balances traffic across them, providing active load balancing and failover.
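The failover behavior can be pictured with a small routing sketch. This is not how Cloudflare is configured or implemented. The region names, the preference order, and the `route` function are hypothetical, chosen only to show the idea that any healthy region can serve a request in an active-active layout.

```python
def route(preferred: list[str], healthy: set[str]) -> str:
    """Return the first healthy region in the caller's preference order."""
    for region in preferred:
        if region in healthy:
            return region
    raise RuntimeError("no healthy region available")


if __name__ == "__main__":
    # Hypothetical region labels; the source names only the geographies.
    order_for_eu_user = ["europe", "us-1", "us-2", "asia"]

    # All regions healthy: the nearest region serves the request.
    print(route(order_for_eu_user, {"europe", "us-1", "us-2", "asia"}))

    # Nearest region unavailable: traffic fails over to the next one.
    print(route(order_for_eu_user, {"us-1", "us-2", "asia"}))
```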

Clusters scale vertically and horizontally by adding CPU, NVMe storage, or nodes as demand grows. Hardware upgrades and node additions are routine operational actions, not architectural events.

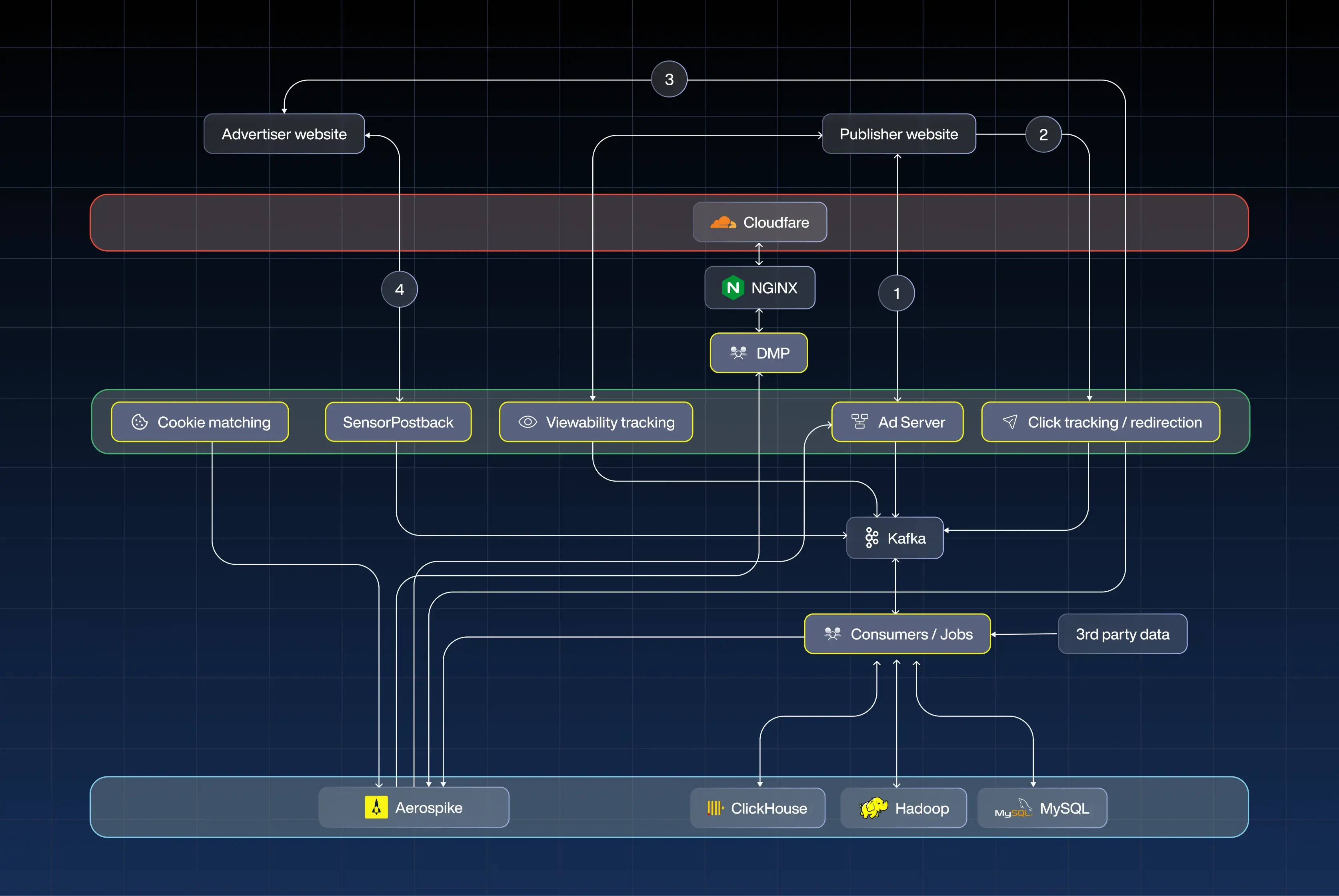

(1) In the real-time ad request path, traffic from the publisher website enters through Cloudflare and NGINX, passes through MGID’s data management platform (DMP) layer for audience enrichment, and reaches the ad server, where bidding and decision logic run. The ad server performs multiple sub-millisecond reads and updates in Aerospike to retrieve user profiles, cookie mappings, and contextual data before returning a response within the latency budget.

(2) If a user clicks, the request flows through the click-tracking and redirection service before the user lands on the advertiser website, with click events captured and streamed into Kafka.

(3) Impression and viewability events are recorded when ads render on publisher pages.

(4) When users complete desired actions and convert on the advertiser website, conversion postbacks are sent back to MGID and ingested through sensor/postback services.

Events from steps 2, 3, and 4 stream through Kafka and are processed by consumer jobs, which feed downstream analytics systems, such as ClickHouse and Hadoop, for large-scale processing and model training. Relevant signals are written back into Aerospike in near real time, keeping user features current for subsequent bidding decisions across regions.
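The write-back loop described above can be sketched in a few lines. This is a simplified illustration, not MGID's pipeline. `InMemoryProfileStore` is a hypothetical stand-in for the profile store and does not mirror the Aerospike client API, a plain list stands in for the Kafka stream, and the feature names and the click-through-rate feature are assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Event:
    user_id: str
    kind: str  # "impression", "click", or "conversion"


class InMemoryProfileStore:
    """Hypothetical stand-in for the profile store (not the Aerospike API)."""

    def __init__(self) -> None:
        self._profiles: dict[str, dict[str, int]] = defaultdict(
            lambda: defaultdict(int)
        )

    def increment(self, user_id: str, feature: str, by: int = 1) -> None:
        self._profiles[user_id][feature] += by

    def read(self, user_id: str) -> dict[str, int]:
        # .get avoids creating an empty profile for unknown users.
        return dict(self._profiles.get(user_id, {}))


def consume(events: list[Event], store: InMemoryProfileStore) -> None:
    """Apply each event to per-user counters, as a consumer job might."""
    for event in events:
        store.increment(event.user_id, f"{event.kind}s")


def click_through_rate(profile: dict[str, int]) -> float:
    """Example feature derived at decision time from the stored counters."""
    impressions = profile.get("impressions", 0)
    return profile.get("clicks", 0) / impressions if impressions else 0.0


if __name__ == "__main__":
    store = InMemoryProfileStore()
    stream = [
        Event("u1", "impression"),
        Event("u1", "impression"),
        Event("u1", "click"),
    ]
    consume(stream, store)
    profile = store.read("u1")
    print(profile, click_through_rate(profile))
```

The point of the sketch is the ordering: events update the profile first, so the next bidding decision for the same user reads the refreshed features.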

Results

190,000+ reads per second with stable behavior

MGID processes approximately 100,000 ad requests per second, resulting in around 190,000 Aerospike reads per second. While baseline writes average around 5,000 per second, spikes can reach 150,000 writes per second.
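A quick back-of-the-envelope calculation puts these figures in proportion. The four input numbers come from the text above. The combined peak figure rests on the added assumption that reads hold at their usual rate during a write spike, which the source does not state.

```python
AD_REQUESTS_PER_SEC = 100_000
READS_PER_SEC = 190_000
BASELINE_WRITES_PER_SEC = 5_000
PEAK_WRITES_PER_SEC = 150_000

# Average Aerospike reads per ad request.
reads_per_request = READS_PER_SEC / AD_REQUESTS_PER_SEC

# How much larger a write spike is than the baseline write rate.
write_spike_factor = PEAK_WRITES_PER_SEC / BASELINE_WRITES_PER_SEC

# Assumption: the read rate is unchanged while writes are at their peak.
peak_combined_ops = READS_PER_SEC + PEAK_WRITES_PER_SEC

if __name__ == "__main__":
    print(f"average reads per ad request: {reads_per_request:.1f}")
    print(f"write spike vs. baseline: {write_spike_factor:.0f}x")
    print(f"combined operations at peak (assumed): {peak_combined_ops:,}/s")
```

The average works out to about 1.9 reads per ad request, and a write spike is roughly 30 times the baseline write rate.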

Despite traffic shifts and write bursts, the real-time decision path remains stable. Performance does not degrade as load changes.

Twelve years without replatforming

Since adopting Aerospike in 2014, MGID has:

- Expanded from two regions to four
- Increased revenue roughly tenfold
- Scaled from millions to billions of user profiles
- Evolved from basic retargeting to ML-driven personalization

Through that growth, the core data architecture has remained intact.

Predictable performance under real-world conditions

MGID operates in an environment defined by fluctuating traffic, evolving models, and multi-region distribution. Aerospike delivers consistent behavior across those conditions, allowing engineering teams to focus on product innovation instead of database instability.