What distributed tracing solves in low-latency architectures

Learn how distributed tracing isolates p99 latency spikes, controls overhead with sampling, and keeps low-latency architectures stable at scale.

A latency spike in one request is rarely caused by a single problem. More often it is a chain reaction across request routing, application logic, network calls, and data access. Distributed tracing replaces guesswork with evidence by answering one question, “Where did the time go in this specific request?”, across every hop that touched it.

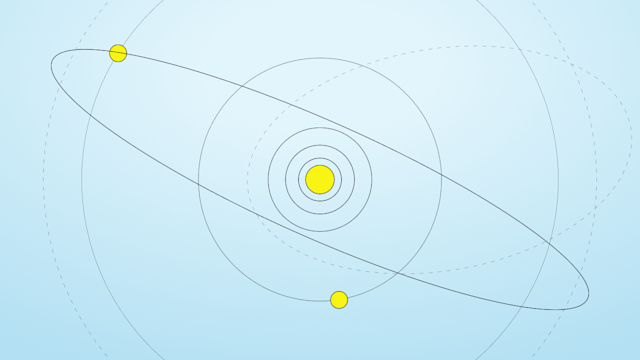

Tracing does that by turning one user request into a trace made of spans. Each span represents timed work in one component, and spans link into a causal tree so you see what happened first and what depended on it. This is the fastest way to isolate whether the problem is compute, queuing, retries, downstream dependency latency, or an unexpected fan-out.
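As a rough illustration of how a span tree answers “where did the time go,” the sketch below models spans as a small tree and finds the span with the most self time (time not covered by its children). The `Span` class, the service names, and the timings are hypothetical, and the self-time calculation assumes child spans run one after another rather than in parallel.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """One timed unit of work; times are in milliseconds (hypothetical model)."""
    name: str
    start_ms: float
    end_ms: float
    children: list = field(default_factory=list)

    @property
    def duration_ms(self) -> float:
        return self.end_ms - self.start_ms

    @property
    def self_time_ms(self) -> float:
        # Time not covered by child spans. Assumes sequential children;
        # parallel children could push this negative, so clamp at zero.
        child_total = sum(c.duration_ms for c in self.children)
        return max(0.0, self.duration_ms - child_total)


def slowest_self_time(root: Span) -> Span:
    """Walk the tree and return the span with the largest self time."""
    best = root
    stack = [root]
    while stack:
        span = stack.pop()
        if span.self_time_ms > best.self_time_ms:
            best = span
        stack.extend(span.children)
    return best


# A made-up trace: the gateway calls auth, then an order service that hits a database.
trace = Span("gateway", 0, 48, [
    Span("auth", 1, 4),
    Span("order-service", 5, 47, [
        Span("db.query", 6, 41),
        Span("cache.get", 42, 43),
    ]),
])

print(slowest_self_time(trace).name)  # db.query
```

In this made-up trace the gateway span is the longest overall, but almost all of its time belongs to the database call underneath it, which is the distinction the causal tree makes visible.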

For low-latency systems, the first problem is overhead. Tracing works in production only if its baseline cost stays below the margin your p99 and p999 latency budgets allow. Measured data from an at-scale tracing deployment shows what “baseline” looks like when tracing is implemented as tight library code, not as heavy per-request logging. According to Google research, root span creation and destruction averaged 204 nanoseconds, and non-root spans averaged 176 nanoseconds, while unsampled annotation work averaged about nine nanoseconds and sampled annotations about 40 nanoseconds on a 2.2 GHz x86 server. Those numbers matter because they sit well below the microsecond level at which added per-request work starts to noticeably affect real-time systems.
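To put those figures against a budget, here is a back-of-the-envelope sketch. It assumes every span for a request is created in one process, that the cited averages transfer to your hardware (they may not), and a hypothetical 1 ms p99 budget; the function name and the span and annotation counts are made up for illustration.

```python
# Per-operation costs in nanoseconds, taken from the figures cited above.
ROOT_SPAN_NS = 204           # root span creation + destruction
CHILD_SPAN_NS = 176          # non-root span creation + destruction
SAMPLED_ANNOTATION_NS = 40   # one annotation on a sampled span


def span_overhead_ns(child_spans: int, annotations_per_span: int) -> int:
    """Estimate in-process span cost for one sampled request."""
    total_spans = 1 + child_spans
    span_cost = ROOT_SPAN_NS + child_spans * CHILD_SPAN_NS
    annotation_cost = total_spans * annotations_per_span * SAMPLED_ANNOTATION_NS
    return span_cost + annotation_cost


overhead = span_overhead_ns(child_spans=9, annotations_per_span=2)
budget_ns = 1_000_000  # hypothetical 1 ms p99 budget

print(overhead)                                # 2588
print(f"{overhead / budget_ns * 100:.2f}%")    # 0.26%
```

Under these assumptions, ten spans with two annotations each cost a few microseconds, which is small next to a millisecond budget and is why the surrounding export and IO work tends to matter more.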

The overhead you actually notice in production usually comes from everything around those primitives, such as serialization, exports, disk or network IO, and high-cardinality attributes. The practical goal is not “collect everything,” but “collect enough to make the next performance decision correctly.”

Distributed tracing is the only observability signal that shows causality across service boundaries for one request

For low-latency systems, the baseline per-span cost has to stay in the hundreds of nanoseconds range to be viable

Most production pain comes from export and data volume, not from span start and end calls

How trace context propagates across services

Tracing fails when context breaks. If a downstream call does not receive the trace identifier, the trace fractures into disconnected islands, and you lose end-to-end causality.

The current enterprise default for wire propagation is the W3C Trace Context standard. It gives services a common format that crosses languages and vendors without custom code or middleware to translate one tracing format into another. In that specification, the traceparent field carries four dash-separated values: a version, a trace id, a parent id, and trace flags, with fixed widths of 2, 32, 16, and 2 hex characters respectively. Unlike ad hoc proprietary headers, fixed widths make parsing predictable and reduce edge case behavior under load.

That also lets you reason about the minimum propagation payload: four fields of 2, 32, 16, and 2 characters plus three separators come to 55 characters. The W3C parsing guidance explicitly calls out that if a higher version is detected and the header is shorter than 55 characters, implementations should not parse it and should restart the trace. If you run latency-sensitive gateways, that 55-character lower bound is a concrete interoperability constraint.
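The fixed widths make a strict parser short. The following is a minimal sketch rather than a complete implementation of the specification: the function name `parse_traceparent` is an assumption, and returning `None` stands in for "restart the trace."

```python
import re

# 2 + 1 + 32 + 1 + 16 + 1 + 2 = 55 characters for the four fixed-width fields.
MIN_LENGTH = 55
_TRACEPARENT = re.compile(
    r"([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})(-.*)?"
)


def parse_traceparent(header: str):
    """Return (version, trace_id, parent_id, flags) or None to restart the trace."""
    header = header.strip()
    if len(header) < MIN_LENGTH:
        return None  # too short to hold the four fixed-width fields
    match = _TRACEPARENT.fullmatch(header)
    if match is None:
        return None
    version, trace_id, parent_id, flags, rest = match.groups()
    if version == "ff":
        return None  # "ff" is not a valid version
    if version == "00" and rest:
        return None  # version 00 has exactly four fields
    if trace_id == "0" * 32 or parent_id == "0" * 16:
        return None  # all-zero identifiers are invalid
    return version, trace_id, parent_id, int(flags, 16)


example = "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"
print(len(example))                # 55
print(parse_traceparent(example))
print(parse_traceparent("00-4bf92f35-00f067aa-01"))  # None
```

The lowest bit of the returned flags value is the sampled flag, so `flags & 0x01` tells a downstream service whether the caller recorded this trace.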

The companion field tracestate is for vendor-specific state. It is optional, and it is where propagation becomes expensive. The W3C spec says implementations should propagate at least 512 characters of the combined header, and it defines truncation guidance for when they cannot carry more: remove whole entries, starting with entries larger than 128 characters. In other words, tracestate growth translates into real bytes on every hop, and that cost hits every downstream service even if only one team benefits from the extra context.
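The truncation guidance can be sketched in a few lines. This is an illustration under assumptions, not a conforming implementation: the function name is hypothetical, it treats the header as a plain comma-separated list without validating keys or values, and it drops every oversized entry at once before trimming from the end.

```python
MAX_COMBINED = 512   # characters of combined header this hop is willing to carry
MAX_ENTRY = 128      # entries longer than this are removed first


def truncate_tracestate(header: str, max_combined: int = MAX_COMBINED) -> str:
    """Shrink a tracestate header by removing whole entries only."""
    entries = [e.strip() for e in header.split(",") if e.strip()]
    if len(",".join(entries)) <= max_combined:
        return ",".join(entries)
    # First pass: drop whole entries longer than 128 characters.
    entries = [e for e in entries if len(e) <= MAX_ENTRY]
    # Second pass: drop whole entries from the end until the header fits.
    while entries and len(",".join(entries)) > max_combined:
        entries.pop()
    return ",".join(entries)


# One oversized entry followed by ten 63-character entries (made-up vendors).
big = "a=" + "x" * 200
small = [f"k{i}=" + "v" * 60 for i in range(10)]
result = truncate_tracestate(",".join([big] + small))

print(len(result))            # 511
print(result.count(",") + 1)  # 8 entries survive
```

Entries are never cut in the middle, because a partial value would be worse for the vendor that owns it than a missing one.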

OpenTelemetry aligns its in-process data model with these identifiers so your code moves from API calls to wire propagation without mismatch. The OpenTelemetry trace API specifies that TraceId is a 16-byte array and SpanId is an 8-byte array. It also defines hex encodings as 32 lowercase hex characters for TraceId and 16 lowercase hex characters for SpanId.
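The byte-array and hex forms map onto each other directly, as this small sketch shows. The helper names are hypothetical and the code uses only the standard library rather than an OpenTelemetry SDK.

```python
import os


def new_id(num_bytes: int) -> bytes:
    """Random identifier; all-zero ids are invalid, so retry in that unlikely case."""
    while True:
        value = os.urandom(num_bytes)
        if any(value):
            return value


def format_traceparent(trace_id: bytes, span_id: bytes, sampled: bool) -> str:
    """Encode a 16-byte trace id and 8-byte span id as a version-00 traceparent."""
    if len(trace_id) != 16 or len(span_id) != 8:
        raise ValueError("trace_id must be 16 bytes and span_id 8 bytes")
    flags = "01" if sampled else "00"
    # bytes.hex() produces lowercase hex, which is what the wire format expects.
    return f"00-{trace_id.hex()}-{span_id.hex()}-{flags}"


header = format_traceparent(new_id(16), new_id(8), sampled=True)
print(len(header))  # 55
```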

Trace context propagation is a reliability feature

W3C Trace Context gives fixed-width identifiers and explicit size guidance for predictable performance effects

Tracestate is where propagation cost balloons, and the 512-character limit is the guardrail

Instrumentation that keeps p99 stable

The engineering choice is not whether to trace, but what kind of tracing you can afford in the critical path.

Unlike log-based correlation, distributed tracing expresses parent-child relationships across services, but only if instrumentation creates spans at the right boundaries and propagates context without gaps. Unlike metrics, traces preserve per-request detail, but that detail means traces become large and expensive to store if you allow unbounded attributes and events.

OpenTelemetry defines configurable limits that prevent runaway span payloads. In the trace SDK, default limits include EventCountLimit 128, LinkCountLimit 128, AttributePerEventCountLimit 128, and AttributePerLinkCountLimit 128. These numbers define the maximum shape of one span, even when an application experiences an error storm that tries to attach too much detail.
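To show what a count limit does during an error storm, here is a standard-library sketch of a span that caps its events. The `BoundedSpan` class is hypothetical and is not the OpenTelemetry SDK; it discards new events once the limit is reached, and a real SDK may choose differently which events to keep.

```python
from dataclasses import dataclass, field
from typing import Optional

EVENT_COUNT_LIMIT = 128  # default event limit cited above


@dataclass
class BoundedSpan:
    name: str
    event_limit: int = EVENT_COUNT_LIMIT
    events: list = field(default_factory=list)
    dropped_events: int = 0

    def add_event(self, name: str, attributes: Optional[dict] = None) -> None:
        # Once the limit is hit, count the event as dropped instead of storing it.
        if len(self.events) >= self.event_limit:
            self.dropped_events += 1
            return
        self.events.append((name, dict(attributes or {})))


span = BoundedSpan("checkout")
for attempt in range(1000):  # simulated error storm
    span.add_event("retry", {"attempt": attempt})

print(len(span.events), span.dropped_events)  # 128 872
```

Keeping a dropped-event counter preserves the signal that something excessive happened without paying to store all of it.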

Real systems also need domain-specific guardrails. One example is slow query tracing, where instead of tracing everything, you trace what exceeds a threshold. For example, in Aerospike Graph query tracing, the slow query threshold defaults to -1, meaning disabled until you explicitly enable it, and the sampling percentage defaults to 100% for qualifying queries. This pattern is useful beyond graph workloads because it enforces the rule: Do not spend tracing budget on the fast path, but spend it on outliers and failures.

Unlike always-on tracing, threshold-based tracing keeps your normal p99 stable because it avoids adding overhead to the request classes that already meet service level agreements. Unlike probabilistic sampling, threshold-based tracing is deterministic for cases that affect customer experience.
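The threshold-plus-percentage pattern reduces to a small decision function. This sketch is generic: the function and parameter names are hypothetical and are not Aerospike Graph configuration keys, though the defaults mirror the behavior described above (a threshold of -1 disables tracing, and 100% of qualifying queries are sampled).

```python
import random


def should_trace_query(
    duration_ms: float,
    threshold_ms: float = -1,
    sample_percent: float = 100.0,
    rng=random.random,
) -> bool:
    """Decide whether to record a trace for a finished query."""
    if threshold_ms < 0:
        return False  # negative threshold means slow-query tracing is disabled
    if duration_ms < threshold_ms:
        return False  # fast path: spend no tracing budget here
    # Sample a percentage of the qualifying (slow) queries.
    return rng() * 100.0 < sample_percent


print(should_trace_query(500))                    # False
print(should_trace_query(500, threshold_ms=100))  # True
print(should_trace_query(50, threshold_ms=100))   # False
```

Because both knobs are plain parameters, they can live in configuration and be tuned during an incident without redeploying code.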

Keep span payload growth bounded with explicit SDK limits so one incident cannot explode your trace volume

Prefer tracing policies that target slow and failing requests, not normal traffic

Use explicit thresholds and sampling controls so tracing cost is a parameter you tune without code changes

Sampling strategies and the scaling math

Sampling is required in high-throughput systems because the underlying physics have not changed: If you log too much per request, you will pay in latency or throughput.

Measurements from a production workload show what happens as you change sample rates. According to Google, with full sampling, or 1 divided by 1, average latency increased 16.3% and average throughput decreased 1.48%. Moving to 1 divided by 16 reduced the measured latency change to 2.12% and the throughput change to -0.08%. At 1 divided by 1024, measured changes were -0.20% latency and -0.06% throughput, and the experimental errors for these measurements were 2.5% latency and 0.15% throughput. In addition, penalties associated with sampling frequencies less than 1 divided by 16 were within experimental error, and a sampling rate as low as 1 divided by 1024 still provided enough trace data for high-volume services.

That is the practical justification for head-based sampling in the SDK. Head sampling makes a decision when the trace begins. Unlike tail sampling, head sampling does not require buffering the entire trace before deciding whether to keep it, so it reduces memory demand in the collection pipeline.
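One common way to make a head-sampling decision is to derive it from the trace id, so every service reaches the same answer without coordination. The sketch below is in the spirit of trace-id ratio samplers but is not any particular SDK's algorithm; the function name and the choice of the low 64 bits are assumptions.

```python
def head_sample(trace_id_hex: str, ratio: float) -> bool:
    """Keep a trace if the low 64 bits of its id fall below ratio * 2**64."""
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("ratio must be between 0 and 1")
    if len(trace_id_hex) != 32:
        raise ValueError("trace id must be 32 hex characters")
    bound = int(ratio * (1 << 64))
    low_bits = int(trace_id_hex[16:], 16)
    return low_bits < bound


trace_id = "4bf92f3577b34da6a3ce929d0e0e4736"
print(head_sample(trace_id, 1.0))     # True: full sampling keeps everything
print(head_sample(trace_id, 1 / 16))  # False for this particular id
print(head_sample(trace_id, 0.0))     # False: sampling disabled
```

The decision costs one integer comparison and no buffering, which is why it fits in the request path.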

Tail sampling is used for a different reason. Unlike head sampling, tail sampling decides based on what actually happened in the full trace, including whether an error occurred or whether latency exceeded a threshold. In OpenTelemetry Collector tail sampling, decision_wait defaults to 30 seconds, num_traces defaults to 50,000 traces in memory, and expected_new_traces_per_sec defaults to 0. Those defaults imply a real in-memory working set when traffic is high.
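The buffering that tail sampling needs can be illustrated with a toy in-memory buffer. This is a simplified sketch and not the OpenTelemetry Collector's implementation: the `TailBuffer` class, its latency threshold, and its eviction behavior are assumptions, and the caller is expected to invoke `decide` once the decision wait has elapsed.

```python
from collections import OrderedDict


class TailBuffer:
    """Hold spans per trace until a keep-or-drop decision is made."""

    def __init__(self, num_traces: int = 50_000, latency_threshold_ms: float = 250.0):
        self.num_traces = num_traces
        self.latency_threshold_ms = latency_threshold_ms
        self._traces = OrderedDict()  # trace_id -> list of (duration_ms, is_error)

    def add_span(self, trace_id: str, duration_ms: float, is_error: bool = False) -> None:
        if trace_id not in self._traces and len(self._traces) >= self.num_traces:
            self._traces.popitem(last=False)  # buffer full: evict the oldest trace
        self._traces.setdefault(trace_id, []).append((duration_ms, is_error))

    def decide(self, trace_id: str) -> bool:
        """Keep the trace if any span errored or exceeded the latency threshold."""
        spans = self._traces.pop(trace_id, [])
        return any(err or dur >= self.latency_threshold_ms for dur, err in spans)


buf = TailBuffer()
buf.add_span("t1", 12.0)
buf.add_span("t1", 300.0)  # slow span
buf.add_span("t2", 5.0)
print(buf.decide("t1"))  # True
print(buf.decide("t2"))  # False
```

Every undecided trace occupies memory until `decide` runs, which is the working set the defaults above imply.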

Sizing tail sampling buffers is straightforward arithmetic once you stop treating tracing as “free telemetry.” Your in-memory traces are roughly proportional to traces per second times decision wait. Then multiply by average bytes per trace.

One concrete anchor point for the bytes side is that, according to Google, an at-scale tracing repository measured an average of 426 bytes per span. If your typical request produces 50 spans end-to-end, one trace is roughly 21 KB of raw span payload before indexing and exporter overhead. If you run 1,000 traces per second and you buffer 30 seconds to tail sample, your collector working set is about 630 MB just for that raw span payload. The number increases linearly with span count, decision wait, and traffic.
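The same arithmetic as a reusable function, using the figures from the paragraph above. The function name is hypothetical, and the result covers raw span payload only, as stated.

```python
def tail_buffer_bytes(
    traces_per_sec: int,
    decision_wait_s: int,
    spans_per_trace: int,
    bytes_per_span: int = 426,
) -> int:
    """Raw span payload held in memory while waiting for tail-sampling decisions."""
    buffered_traces = traces_per_sec * decision_wait_s
    return buffered_traces * spans_per_trace * bytes_per_span


total = tail_buffer_bytes(traces_per_sec=1_000, decision_wait_s=30, spans_per_trace=50)
print(total)  # 639000000
```

Unrounded, the result is 639,000,000 bytes; rounding each trace to 21 KB first gives the same ballpark of about 630 MB. The 30,000 buffered traces in this example also fit under the 50,000-trace default mentioned earlier, which would stop being true at roughly 1,700 traces per second with the same 30-second wait.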

Head sampling reduces collection pipeline cost because it does not need full trace buffering

Tail sampling captures the traces you care about, but it shifts cost into collector memory and routing requirements

Sampling rates below 1 divided by 16 keep latency penalties within measurement error in high-throughput workloads

Making database spans useful for performance decisions

Database calls are often where traces go wrong. Teams add a db span and expect answers, but capture the wrong attributes; create too many unique values in a metric or attribute dimension, making the system slow, expensive, or unstable; or fail to align trace spans with the metrics the site reliability engineering team already trusts.

The first requirement is consistent time series metrics for database latency, to see distribution changes without sampling noise. OpenTelemetry semantic conventions define a required database client operation duration metric with explicit histogram bucket boundaries of 0.001, 0.005, 0.01, 0.05, 0.1, 0.5, 1, 5, and 10 seconds. That range matters for low-latency enterprise systems because it spans the millisecond region, which typically includes p99, and extends into multi-second tail behavior that indicates retries, hot partitions, or degraded dependencies.
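To see how durations land in those buckets, here is a short standard-library sketch. It assumes each boundary is an inclusive upper bound and adds one overflow bucket for anything above 10 seconds; the function name and sample durations are made up.

```python
from bisect import bisect_left

# Bucket boundaries in seconds, as listed above.
BOUNDARIES_S = [0.001, 0.005, 0.01, 0.05, 0.1, 0.5, 1, 5, 10]


def bucket_counts(durations_s):
    """Count durations per bucket; the last slot holds values above 10 s."""
    counts = [0] * (len(BOUNDARIES_S) + 1)
    for duration in durations_s:
        # bisect_left finds the first boundary >= duration (inclusive upper bound).
        counts[bisect_left(BOUNDARIES_S, duration)] += 1
    return counts


samples = [0.0004, 0.002, 0.003, 0.02, 0.7, 12.0]
print(bucket_counts(samples))  # [1, 2, 0, 1, 0, 0, 1, 0, 0, 1]
```

A shift of counts from the first few buckets toward the right-hand ones is the distribution change this metric is meant to surface.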

The second requirement is a high-fidelity view of the database itself, independent of tracing. For example, Aerospike Database provides latency histograms for in-depth transaction activity analysis. The histogram bucket thresholds follow powers of two, with a bucket threshold of two raised to the bucket number in the configured units. By default, those latency histograms are in milliseconds, configurable to microseconds. That is the server-side measurement needed to debug microbursts that do not show up in coarser client-side traces.

The third requirement is control over exported histogram resolution, so your monitoring system stays efficient. For example, the Aerospike Prometheus Exporter provides a way to configure how many latency histogram buckets to export, describing bucket thresholds from 2 to the power of 0 through 2 to the power of 16, or 17 buckets total. It also gives an example where exporting only the first five buckets corresponds to thresholds up to 1 ms, 2 ms, 4 ms, 8 ms, and 16 ms. That bucket control is a useful model for trace attribute design as well, because if you cannot bound it, you cannot run it at scale.
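The power-of-two thresholds are easy to generate, which makes the export trade-off concrete. This sketch only computes threshold values in the configured unit; the function name is hypothetical and it does not model the exporter's actual configuration.

```python
def bucket_thresholds(count: int = 17):
    """Thresholds 2**0 through 2**(count - 1), in the configured unit (ms by default)."""
    return [2 ** i for i in range(count)]


print(bucket_thresholds(5))       # [1, 2, 4, 8, 16]
print(len(bucket_thresholds()))   # 17
print(bucket_thresholds()[-1])    # 65536
```

Exporting five buckets instead of 17 cuts the number of exported bucket series per histogram by more than two thirds while still resolving everything up to 16 ms.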

For enterprises running high-performance data access, the practical rule is simple: Treat traces as per-request evidence, and treat histograms as an accurate representation of latency distribution. Use traces to explain the outliers, and use histograms to know whether the outliers are becoming normal.

Operating distributed tracing at enterprise scale

Enterprise tracing is an operations problem before it is a tooling problem.

According to Google, one large deployment reported that production clusters generated more than 1 terabyte of sampled trace data per day and wanted trace data retained for at least two weeks. That is with sampling, not with full fidelity. This is why trace retention, storage tiering, and query patterns matter as much as instrumentation.

The same deployment also shows that when engineered correctly, tracing collection doesn’t have to consume meaningful host resources. In load testing, the tracing daemon CPU usage examples show 0.125% of one core at 25 processes with 10K per second per process, and 0.267% at 10 processes with 200K per second per process. The same report states that during collection in production, the daemon never used more than 0.3% of one core. Network cost was also measured, with each span corresponding to 426 bytes on average, and trace data collection responsible for less than 0.01% of network traffic in that environment.

Those numbers are useful because they set a target. You want trace IO to be measurable and bounded, not something that competes with your data plane.

The second enterprise requirement is governance. Tracing data often includes identifiers with privacy and security implications. Even a simple configuration toggle, such as literal redaction, illustrates the operational expectation. For example, in the Aerospike Graph configuration, the redact literals option defaults to false, meaning you must explicitly turn it on if you want query literal redaction in logs. Treat that as a reminder that privacy is a policy choice that has to be encoded in configuration.

The third requirement is that traces must be queryable fast enough to support incident response. If your trace store takes minutes to return results during an outage, engineers fall back on dashboards and manual log searches. The only way to avoid that is to design trace indexing and retention policies around incident workflows rather than around raw ingest success.

Aerospike and distributed tracing

If you run low-latency systems, distributed tracing lets you figure out what causes latency spikes. It gives you the request path, the downstream calls, and the span timing that explains what caused p99 to increase. The tradeoff is that tracing must be engineered like part of the production data plane. Sampling choices, payload limits, and retention policies decide whether tracing helps or becomes a problem itself.

Aerospike is built for predictable low-latency data access, and it makes latency histograms available that can be configured down to microseconds for detailed performance analysis. If you want distributed tracing that produces answers to act on, pair end-to-end traces with accurate latency data from the server and use that combination to guide how you make decisions about your data layer and your application architecture.