After scalability and availability: Why predictability is the new standard for modern systems

Scalability and availability are no longer enough. Learn why predictability is becoming the key to building stable, high-performance systems in the AI era.

Remember the late 1990s and the dot-com bubble? The internet had just become commercial, and suddenly, everything felt possible. Anyone with a domain name and a simple HTML page seemed to have a business. People registered domains like pizza.com, put up a basic website, and sometimes made serious money.

Looking back, some of it appears absurd. But the people involved were not foolish. They were trying to understand a new technology, and the rules had not yet been written. Beneath the speculation, something much more important happened during that period: we rebuilt computing.

The early internet exposed a fundamental limitation. One machine could no longer handle what online systems demanded. As the number of users grew, systems had to process more traffic, more functionality, and more interconnected components.

The solution was an architectural shift from monolithic systems to distributed ones, from single machines to clusters, and from vertical scaling to horizontal scaling. To build stable systems, engineers had to solve two problems: scalability and availability. If a system could grow with demand and continue functioning when machines failed, it was considered stable.

Over the following two decades, the industry became remarkably good at solving these challenges. Distributed databases, stateless services behind load balancers, replication, sharding, and consensus protocols became standard engineering tools.

Today, with enough resources and engineering competence, it is possible to build systems that scale to millions of users and survive hardware failures. But while scalability and availability remain important, they are no longer the defining challenges they once were.

The AI shift: When one request becomes hundreds

We are now living through another moment that feels strikingly similar to the early internet era. Artificial intelligence has become the new frontier. There is intense experimentation, enormous investment, and entire companies being built on APIs that did not exist a few years ago.

Just as in the late 1990s, some of today’s ideas will probably look naïve in hindsight. But beneath the hype, something structural is changing again. For two decades, building stable systems meant solving two problems: scalability and availability. The AI era introduces a third: predictability.

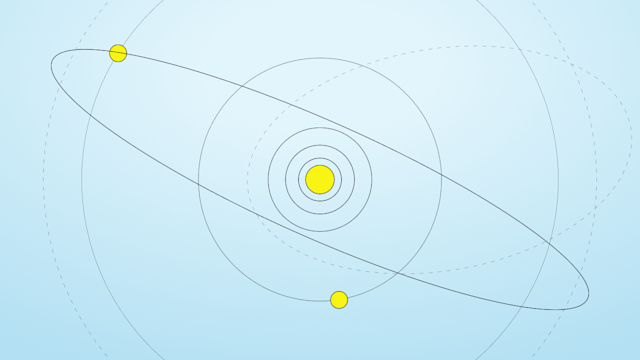

During the early internet era, system load scaled roughly with the number of users and the actions they performed. More users meant more requests. That created a scale problem, which distributed systems were designed to solve.

With AI systems, that mapping has changed. A single human interaction no longer corresponds to a handful of requests to underlying systems. Instead, it triggers a cascade of internal operations: model invocations, retrieval calls, database lookups, downstream service requests, and tool executions.

In more advanced architectures, agents may perform recursive reasoning steps, generating additional queries as they refine an answer. What begins as one prompt expands into a large, dynamic chain of internal operations: hundreds, sometimes thousands, for a single user request. The relationship between human activity and infrastructure load is shifting from one-to-a-few to one-to-many, and in recursive workflows it can grow exponentially with depth.
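As a rough illustration of how quickly fan-out compounds, the sketch below counts operations in a toy call tree. It assumes, purely for illustration, that every operation triggers the same number of further operations down to a fixed depth. The function name `total_operations`, the branching factor of 3, and the depths are hypothetical, and real agent workloads are far less regular.

```python
def total_operations(branching: int, depth: int) -> int:
    """Count operations in a uniform call tree.

    Level 0 is the single user request; each operation at one level
    triggers `branching` operations at the next level, down to `depth`.
    """
    return sum(branching ** level for level in range(depth + 1))


# Hypothetical branching factor of 3, increasing depth.
for depth in range(1, 6):
    print(f"depth={depth}: {total_operations(3, depth)} operations")
```

Under these assumptions, a branching factor of 3 passes 100 operations at depth 4 (121 in total) and reaches 364 at depth 5.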

This growth raises the scale at which systems must run, but scale is a problem the industry largely learned to handle during the internet era.

However, the explosion of internal calls also makes it more likely that a slow operation lands on a request’s critical path. As systems fan out into many dependent operations, even a little variability in one component quickly becomes visible to users. The tolerance for rare slow events therefore collapses.

This is why the central challenge of building stable systems is shifting. Scalability and availability remain necessary, but they are no longer sufficient. The emerging challenge is predictability.

When rare events become normal

To understand why statistically insignificant events will start to matter more, consider a simple example. Imagine a database lookup that is fast 99% of the time and slower 1% of the time. A 1% chance of a slow lookup sounds rare.

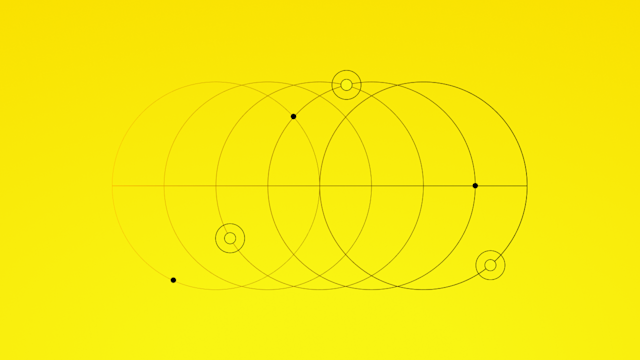

But now imagine that one user request depends on 100 independent database lookups. The probability that at least one of those calls hits the 1% slow path isn’t 1%. It’s about 63%, because the chance that all 100 lookups stay on the fast path is only 0.99^100, or roughly 37%. A rare event at the component level becomes typical at the application boundary.

The situation becomes even more interesting when we look deeper into the tail. Not all slow responses are equally slow. Within that 1%, the slowest 0.1% are much worse than the rest. When a request fans out across many operations, these extremely rare events show up surprisingly often.

If a request triggers 100 internal calls, there is roughly a 10% chance that at least one of them will fall into the slowest 0.1% of responses. So, an event that occurs 0.1% of the time at the database layer will show up in roughly 10% of user interactions. It’s even worse in systems with deeper fan-out.
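The arithmetic behind these figures can be checked with a short sketch. It assumes the calls are statistically independent, as in the example above; the function name `p_any_slow` and the chosen fan-out values are illustrative only.

```python
def p_any_slow(p_slow: float, fanout: int) -> float:
    """Probability that at least one of `fanout` independent calls is slow.

    All calls are fast with probability (1 - p_slow) ** fanout, so the
    complement is the chance that a request sees at least one slow call.
    """
    return 1.0 - (1.0 - p_slow) ** fanout


# Per-call slow probabilities of 1% and 0.1%, at several fan-out sizes.
for p_slow in (0.01, 0.001):
    for fanout in (10, 100, 1000):
        share = p_any_slow(p_slow, fanout)
        print(f"p_slow={p_slow:.1%} fanout={fanout:>4}: {share:.1%} of requests affected")
```

At a fan-out of 100, this gives about 63% for the 1% slow path and about 9.5% for the slowest 0.1%. At a fan-out of 1,000, even the 0.1% tail affects more than 60% of requests.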

In other words, for 99% of user requests to meet a service-level objective (SLO), it is not enough for a database to be fast 99% of the time. With 100 lookups per request, roughly 99.99% of individual lookups must fall within the SLO’s latency budget, which leaves room for only the slowest 0.01%. If there’s no ceiling on the extreme tail, the system can’t be considered reliable.
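The same relationship can be inverted to ask how rare a slow call has to be for a given request-level target. This is a minimal sketch that again assumes independent calls and that a request misses its SLO whenever any single call is slow; `max_slow_fraction` is a hypothetical helper name.

```python
def max_slow_fraction(request_slo: float, fanout: int) -> float:
    """Largest per-call slow fraction that still lets `request_slo` of
    requests complete with every one of their `fanout` calls in budget.

    Solves (1 - p) ** fanout == request_slo for p.
    """
    return 1.0 - request_slo ** (1.0 / fanout)


# Target: 99% of requests within the SLO, at several fan-out sizes.
for fanout in (1, 10, 100, 1000):
    p = max_slow_fraction(0.99, fanout)
    print(f"fanout={fanout:>4}: at most {p:.4%} of calls may be slow")
```

For a fan-out of 100, the result is about 0.01%, which means the latency budget has to hold at roughly the 99.99th percentile of the database's latency distribution.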

Optimization becomes increasingly ineffective

When systems feel slow or unresponsive, the first response is optimization. Engineers add caching layers, adjust timeouts, provision more hardware, or tune configuration parameters. These interventions improve average performance, but they rarely address the underlying issue.

Optimization works by dedicating more resources to a few operations, based on the assumption that those operations occur frequently. In other words, optimization relies on identifying a steady state in system behavior and allocating resources accordingly.

For optimization to work, that steady state must remain reasonably stable and predictable. But in the AI era, steady state begins to erode.

AI agents can dynamically construct execution paths from one request to the next. The pattern of queries may change without warning. New and old content may be equally relevant to the agent’s reasoning process. One interaction may require access to a large and diverse portion of the dataset.

As workloads become less predictable, optimization strategies that depend on stable access patterns become increasingly ineffective. These challenges result not from poor engineering but from the different demands that AI-based workloads place on infrastructure.

Architectures designed around steady-state optimization struggle in environments where behavior constantly shifts. In such systems, stability cannot rely on optimization alone. It must be designed into the behavior of the underlying platform itself.

Predictability as the new metric for stability

Designing for predictability requires a different thought process. Instead of treating variance as an acceptable side effect, predictable systems treat variance as a defect. Design shifts from asking how fast a system is when everything goes right, to asking how bad it can be when conditions change.

This leads to different architectural decisions. Systems designed for predictability avoid tight coupling to cache hit rates and do not depend on favorable workload patterns. They are built to run consistently even when data access is less predictable, datasets grow, or access patterns change.
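To see why coupling to cache hit rates is fragile, consider a small sketch that compares the average lookup latency with the share of requests that touch at least one cache miss. All numbers here are hypothetical assumptions chosen for illustration (a 0.2 ms hit, a 5 ms miss, 100 independent lookups per request); they are not measurements of any real system.

```python
HIT_MS = 0.2    # hypothetical latency of a cache hit
MISS_MS = 5.0   # hypothetical latency of a cache miss
LOOKUPS = 100   # hypothetical number of lookups per user request


def mean_lookup_ms(hit_rate: float) -> float:
    """Average latency of a single lookup at the given cache hit rate."""
    return hit_rate * HIT_MS + (1.0 - hit_rate) * MISS_MS


def share_of_requests_with_miss(hit_rate: float, lookups: int = LOOKUPS) -> float:
    """Share of requests in which at least one independent lookup misses."""
    return 1.0 - hit_rate ** lookups


for hit_rate in (0.999, 0.99, 0.95):
    print(
        f"hit rate {hit_rate:.1%}: "
        f"mean lookup {mean_lookup_ms(hit_rate):.3f} ms, "
        f"{share_of_requests_with_miss(hit_rate):.1%} of requests see a miss"
    )
```

Under these assumptions, a drop in hit rate from 99% to 95% moves the average lookup from about 0.25 ms to 0.44 ms, which still looks healthy on a dashboard, while the share of requests that wait on at least one miss rises from about 63% to over 99%.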

The need for predictable behavior is not new. Certain classes of systems, such as recommendation engines, fraud detection platforms, and real-time bidding systems, have required it for many years. In those environments, one user interaction can depend on hundreds or even thousands of data lookups. Engineers working in those domains have long understood that tightly bounded latency distributions are critical to maintaining application responsiveness.

What is changing now is that workloads involving hundreds of internal operations are becoming more common. While the dot-com era taught the industry how to scale systems to handle many users, AI is teaching us how to build systems that remain stable under volatility. When rare component-level events routinely show up to users, system design needs predictability.

How Aerospike improves predictability

A few systems were designed around this situation before AI made it mainstream.

One example is Aerospike. From the beginning, its architecture focused on deterministic behavior under changing conditions. The goal was to keep latency distributions tightly bounded even when workload patterns shift, cache hit rates drop, or datasets don’t fit in memory.

The system also has to be fast enough. Solving both speed and predictability required a different approach to storage.

Aerospike introduced a disk access model that eliminates disk as the dominant performance bottleneck. The result is a system capable of maintaining tightly bounded tail latency across datasets even when they’re much larger than available memory, while still delivering performance comparable to, or faster than, database architectures that rely on integrated caching layers.

In a recent benchmark comparing Aerospike and ScyllaDB, we evaluated not only peak throughput and average latency but also latency stability, high-percentile jitter, and behavior under low-locality workloads. Results show that Aerospike not only delivers higher throughput, but also maintains stable latency as workload locality declines. In contrast, ScyllaDB’s latency becomes increasingly sensitive to workload locality.

The difference becomes particularly visible at extreme percentiles such as P99.9. Aerospike continues to deliver fast responses with tightly bounded variance, while ScyllaDB exhibits wider latency deviations for outlier requests.