Understanding service availability in distributed systems

Learn what service availability means, why uptime is critical for enterprises, and how high availability architecture ensures resilient, always-on systems.

Service availability is the proportion of time a service remains accessible and functional. In practical terms, it’s measured as a percentage of uptime over a defined period. Absolute 100% uptime is virtually impossible, so availability targets are often expressed in terms of “nines.” A service level agreement (SLA) might guarantee, say, 99.99% availability, meaning no more than roughly 52 minutes of downtime in a year. “Five-nines” availability (99.999%) means essentially continuous operation, equivalent to only 5.26 minutes of downtime per year. High service availability implies not just that the servers are running, but that the application runs as expected without interruption.
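The arithmetic behind the "nines" is simple enough to sketch. The Python below is a minimal illustration that assumes a 365-day year. The function name `allowed_downtime_minutes` is our own, not part of any library.

```python
# Minutes in a 365-day year; a leap year adds 1,440 more.
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600


def allowed_downtime_minutes(availability_pct: float,
                             period_minutes: float = MINUTES_PER_YEAR) -> float:
    """Maximum downtime an availability target permits over a period."""
    if not 0.0 <= availability_pct <= 100.0:
        raise ValueError("availability must be between 0 and 100 percent")
    return period_minutes * (1.0 - availability_pct / 100.0)


if __name__ == "__main__":
    for target in (99.0, 99.9, 99.99, 99.999):
        print(f"{target}%: {allowed_downtime_minutes(target):.2f} minutes/year")
```

Run as a script, it prints roughly 5,256, 525.6, 52.56, and 5.26 minutes per year for two through five nines. Each additional nine cuts the downtime budget by a factor of ten.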

Measuring availability means accounting for both unplanned outages, such as hardware failures or crashes, and scheduled maintenance. A highly available system is engineered to handle routine maintenance or minor component failures with little or no effect on users: if a primary component fails, backup components or nodes take over, so the service stays online. This "always-on" design distinguishes high-availability architectures, which aim to keep services running continuously despite the small failures that occur in any system. Service availability is therefore a reliability metric that indicates how resilient and well-engineered a service is for continuous operation.
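Because both kinds of downtime draw on the same budget, a measured availability figure depends on what gets counted. This sketch assumes a simple outage log. The `Outage` type and the `count_planned` switch are hypothetical: some SLAs exclude announced maintenance windows and others do not.

```python
from dataclasses import dataclass


@dataclass
class Outage:
    minutes: float
    planned: bool  # True for scheduled maintenance, False for unplanned failures


def measured_availability(outages: list[Outage], period_minutes: float,
                          count_planned: bool = True) -> float:
    """Percentage of the period during which the service was available.

    `count_planned` is a hypothetical switch: set it to False if the SLA
    excludes announced maintenance windows from the downtime budget.
    """
    downtime = sum(o.minutes for o in outages if count_planned or not o.planned)
    return 100.0 * (period_minutes - downtime) / period_minutes


if __name__ == "__main__":
    month = 30 * 24 * 60  # 43,200 minutes
    log = [Outage(20, planned=False), Outage(30, planned=True)]
    print(f"{measured_availability(log, month):.3f}%")                       # both counted
    print(f"{measured_availability(log, month, count_planned=False):.3f}%")  # unplanned only
```

Suppose a 30-day month has 20 minutes of unplanned downtime and 30 minutes of planned maintenance. The service measures about 99.884% if maintenance counts and about 99.954% if it does not.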

Why service availability is important for enterprises

For enterprises, especially those providing real-time digital services, high availability is a necessity. Downtime has direct and significant business consequences. Studies have quantified the cost of outages and found staggering figures: one 2024 analysis by Oxford Economics estimates an average cost of $9,000 per minute of service downtime, or $540,000 per hour. This includes lost revenue from transactions that can’t happen, productivity losses, and the immediate costs of recovery.
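The sketch below relates the cited average to availability targets by multiplying downtime minutes by a flat $9,000 per minute. It is illustrative only. It assumes cost grows linearly with outage length, which real incidents rarely do, and the helper names are hypothetical.

```python
# Average cost figure cited above (Oxford Economics, 2024). Real outage costs
# vary by industry, time of day, and duration, so a flat rate is a simplification.
COST_PER_MINUTE_USD = 9_000
MINUTES_PER_YEAR = 365 * 24 * 60


def downtime_cost(minutes: float, cost_per_minute: float = COST_PER_MINUTE_USD) -> float:
    """Estimated cost of an outage at a flat per-minute rate."""
    return minutes * cost_per_minute


def annual_cost_at_target(availability_pct: float) -> float:
    """Cost if a service uses its entire yearly downtime budget for a given target."""
    budget_minutes = MINUTES_PER_YEAR * (1.0 - availability_pct / 100.0)
    return downtime_cost(budget_minutes)


if __name__ == "__main__":
    print(f"One hour down: ${downtime_cost(60):,.0f}")
    for target in (99.9, 99.99, 99.999):
        print(f"{target}%: ${annual_cost_at_target(target):,.0f} per year")
```

An hour of downtime comes to $540,000, matching the figure above. A service that uses its full 99.99% budget (about 52.56 minutes) would lose roughly $473,000 a year at that rate.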

Certain industries face even higher stakes. In financial services, for example, unplanned downtime racks up hundreds of millions of dollars in losses annually. Beyond the direct financial impact, an outage undermines customer trust and drives users to competitors, and that damage lingers longer than the outage itself. After major unplanned outages, enterprises often have to spend on public relations and customer retention to repair the customer experience. This shows how closely a company's reputation and brand loyalty are tied to keeping services available.

High availability is also important because many applications are integrated into daily operations, and user expectations are high. Whether it’s an e-commerce platform, an online banking system, or a real-time analytics service, users worldwide expect access to these services at any time, without delay. If a service is down or unresponsive even briefly, customers may abandon transactions or lose confidence. In some cases, such as emergency response systems or high-frequency trading platforms, even a few seconds of downtime is unacceptable.

Because of this, enterprises set strict uptime targets in their SLAs and often engineer critical systems for "four-nines" (99.99%) availability or better. Each additional nine cuts allowable downtime per year by a factor of ten, but also greatly increases the engineering effort and investment required. Simply put, for enterprises running high-performance, always-on services, consistent service availability is essential to business continuity, revenue protection, and customer satisfaction.

Section summary – Why availability matters:

Downtime affects revenue and productivity

Outages damage customer trust and brand reputation

Some industries cannot tolerate even brief interruptions

Higher SLA targets reduce allowable downtime but cost more

Designing systems for high service availability

High service availability requires architectural design and operational practices. Systems must be built to eliminate single points of failure and to continue operating even when individual components fail or go offline. In practice, this means incorporating several principles and mechanisms into the system’s design.

Redundancy and data replication

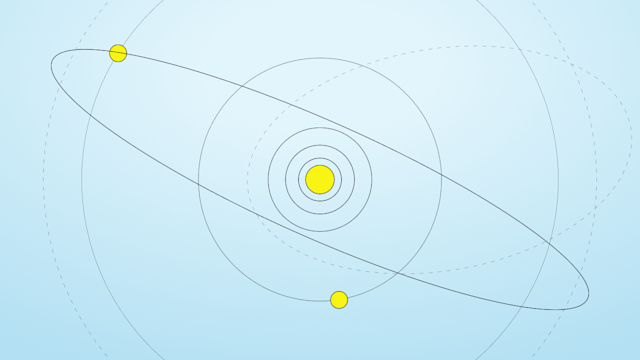

At the heart of high availability architecture is redundancy. Important components such as servers, databases, and network links are provisioned in multiples, so if one instance fails, another takes over. This applies at all levels: Multiple servers run the same application, multiple database nodes store the same data, and even power supplies or network connections are duplicated.
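Standard reliability arithmetic shows why redundancy helps and where it stops helping. The sketch assumes component failures are independent, which spreading redundant parts across separate failure domains tries to approximate. Correlated failures, such as two servers sharing a power circuit, break that assumption.

```python
from math import prod


def series(*availabilities: float) -> float:
    """All components must be up (e.g. load balancer -> app tier -> database)."""
    return prod(availabilities)


def parallel(*availabilities: float) -> float:
    """Redundant copies where any one suffices; assumes failures are independent."""
    return 1.0 - prod(1.0 - a for a in availabilities)


if __name__ == "__main__":
    single_server = 0.99
    pair = parallel(single_server, single_server)  # about 0.9999
    # The redundant pair sits behind a single load balancer that is 99.99% available,
    # so the load balancer now caps the end-to-end figure (about 0.9998).
    print(f"pair: {pair:.6f}, end to end: {series(0.9999, pair):.6f}")
```

Two 99%-available servers in parallel reach about 99.99%. Placing them behind one 99.99%-available load balancer drops the end-to-end figure to about 99.98%, which is why every tier, not just the application servers, needs redundancy.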

Data replication is especially crucial: The system keeps copies of data on different nodes or locations so no single storage failure causes data loss or downtime. In a clustered database, for example, each piece of data is stored on at least two nodes. That way, if one node goes down, a replica on another node is available to serve requests. Redundancy and replication mean the service survives individual component failures without interrupting overall service. They also form the basis of failover mechanisms.
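Here is a simplified picture of how a clustered store might decide which nodes hold each copy. It hashes the key to a partition, then assigns that partition to a fixed number of distinct nodes. This is a generic round-robin sketch with hypothetical names and sizes, not the placement algorithm of any particular database.

```python
import hashlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a stable hash (Python's built-in hash() is salted per process)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


def replica_nodes(partition: int, nodes: list[str], replication_factor: int) -> list[str]:
    """Assign a partition to `replication_factor` distinct nodes, walking the node list in order.

    The first node acts as the primary; the rest hold replicas.
    """
    if not 1 <= replication_factor <= len(nodes):
        raise ValueError("replication factor must be between 1 and the cluster size")
    start = partition % len(nodes)
    return [nodes[(start + i) % len(nodes)] for i in range(replication_factor)]


if __name__ == "__main__":
    cluster = ["node-a", "node-b", "node-c"]
    p = partition_for("user:42", num_partitions=16)
    print(p, replica_nodes(p, cluster, replication_factor=2))
```

The replicas always land on different nodes. With a replication factor of 2 or more, losing any single node therefore leaves at least one copy of every partition.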

Failover and fault tolerance

Failover means the system switches to a backup component when a primary component fails. High-availability clusters are configured so that a standby server or service instance assumes the responsibilities of a failed node. This transition might take seconds or less, often so quickly that most users notice nothing more than a brief delay.
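From a client's point of view, failover can be as simple as trying the primary and falling back to standbys in order. This minimal sketch assumes that a `ConnectionError` means the node is down. The endpoint callables and the `AllEndpointsFailed` exception are hypothetical stand-ins for real network calls.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


class AllEndpointsFailed(Exception):
    """Raised when the primary and every standby are unreachable."""


def call_with_failover(endpoints: Sequence[Callable[[], T]]) -> T:
    """Try the primary first, then each standby in order, returning the first success."""
    errors: list[Exception] = []
    for call in endpoints:
        try:
            return call()
        except ConnectionError as exc:  # treat connection failures as "this node is down"
            errors.append(exc)
    raise AllEndpointsFailed(errors)


if __name__ == "__main__":
    def primary() -> str:
        raise ConnectionError("primary unreachable")

    def standby() -> str:
        return "served by standby"

    print(call_with_failover([primary, standby]))
```

Real clients usually also set timeouts and bound retries, so a slow primary does not delay every request before failover takes effect.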

Fault tolerance goes even further: the system keeps running without any interruption even if multiple failures occur. Truly fault-tolerant systems, such as dual-redundant hardware running in parallel, aim for zero downtime, though they come at high cost and complexity. Most enterprise systems strive for high availability with minimal downtime rather than absolute fault tolerance, balancing practicality with resilience.

The general practice is to use automated health checks and cluster management to detect failures quickly and distribute the workload to healthy nodes. It's also important to distribute redundant components across independent failure domains, such as locating backup servers in a different rack, power circuit, or data center, so one event, such as a power outage or network failure, doesn't knock out both primary and backup. Well-designed failover means a failure triggers a routine swap-over rather than a crisis, keeping the service running.
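Automated health checking is often built on heartbeats: each node reports in periodically, and a node that stays silent past a timeout is treated as failed. The sketch below is a minimal, single-process version with an injectable clock, so it can be tested without sleeping. The class name and timeout value are illustrative assumptions.

```python
import time
from typing import Callable


class HeartbeatMonitor:
    """Declares a node failed if no heartbeat arrives within `timeout_s` seconds."""

    def __init__(self, timeout_s: float, clock: Callable[[], float] = time.monotonic):
        self.timeout_s = timeout_s
        self.clock = clock  # injectable so the logic can be tested without sleeping
        self.last_seen: dict[str, float] = {}

    def heartbeat(self, node: str) -> None:
        self.last_seen[node] = self.clock()

    def healthy_nodes(self) -> list[str]:
        now = self.clock()
        return sorted(n for n, t in self.last_seen.items() if now - t <= self.timeout_s)

    def failed_nodes(self) -> list[str]:
        now = self.clock()
        return sorted(n for n, t in self.last_seen.items() if now - t > self.timeout_s)


if __name__ == "__main__":
    fake_now = [0.0]
    monitor = HeartbeatMonitor(timeout_s=3.0, clock=lambda: fake_now[0])
    monitor.heartbeat("node-a")
    monitor.heartbeat("node-b")
    fake_now[0] = 2.0
    monitor.heartbeat("node-a")  # node-b stays silent
    fake_now[0] = 4.0
    print("healthy:", monitor.healthy_nodes(), "failed:", monitor.failed_nodes())
```

The timeout is the key tuning knob. A short timeout detects failures faster but risks declaring a node dead during a brief network hiccup, which can trigger unnecessary failovers.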

Monitoring and self-healing

High availability isn’t one-and-done, but an ongoing commitment. Monitoring system health continuously is vital. Enterprises use monitoring tools that track server status, response times, error rates, and other indicators of trouble. The goal is to catch incidents early, or even before they fully develop, and react quickly. Self-healing mechanisms automate parts of this response.

For example, if a web server process hangs, an orchestrator might restart it; if a node becomes unresponsive, the cluster manager removes it and redistributes its workload to other nodes. Automated failover, as mentioned, is a form of self-healing.
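The restart behavior described above can be sketched as a small supervisor that reruns a failing task with exponential backoff. This is similar in spirit to the restart back-off that container orchestrators such as Kubernetes apply. The function and parameter names are hypothetical, and a real orchestrator restarts processes or containers rather than Python callables.

```python
import time
from typing import Callable


def supervise(task: Callable[[], None], max_restarts: int = 5, base_delay_s: float = 0.5,
              sleep: Callable[[float], None] = time.sleep) -> int:
    """Run `task`, restarting it with exponential backoff whenever it raises.

    Returns the number of restarts needed; re-raises once `max_restarts` is exhausted.
    """
    restarts = 0
    while True:
        try:
            task()
            return restarts
        except Exception:
            if restarts >= max_restarts:
                raise  # give up and escalate to a human or a higher-level controller
            sleep(base_delay_s * 2 ** restarts)  # 0.5 s, 1 s, 2 s, ...
            restarts += 1


if __name__ == "__main__":
    attempts = {"n": 0}

    def flaky_worker() -> None:
        attempts["n"] += 1
        if attempts["n"] < 3:
            raise RuntimeError("worker hung")

    print("restarts:", supervise(flaky_worker, base_delay_s=0.01))
```

The backoff stops a task that fails on every start from spinning in a tight restart loop. The restart cap hands persistent failures to a human instead of retrying forever.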

Another example is auto-scaling: If traffic surges to a level that risks overloading servers, which could lead to outages, an auto-scaling system launches additional server instances to handle the load, then later scales down. This elasticity helps keep the system running during unpredictable demand spikes. Regular health checks, combined with automated remediation scripts or services, resolve or isolate many potential issues without human intervention. Of course, human site reliability engineers are still important for oversight, but automation reduces the window of downtime and the chance for human error during incidents.
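Many autoscalers use a proportional target-tracking rule: if utilization runs above target, the fleet grows by the same ratio. The sketch follows the basic formula documented for the Kubernetes Horizontal Pod Autoscaler. It omits the tolerance band and stabilization window that real autoscalers use to avoid flapping, and its default bounds are arbitrary assumptions.

```python
import math


def desired_replicas(current: int, utilization_pct: float, target_pct: float,
                     min_replicas: int = 2, max_replicas: int = 20) -> int:
    """Target-tracking rule: scale the fleet in proportion to load relative to a target.

    Proportional formula only; no tolerance band or stabilization window.
    """
    desired = math.ceil(current * utilization_pct / target_pct)
    return max(min_replicas, min(desired, max_replicas))


if __name__ == "__main__":
    print(desired_replicas(4, utilization_pct=90, target_pct=60))   # surge: scale out
    print(desired_replicas(6, utilization_pct=20, target_pct=60))   # quiet: scale in
```

Four servers at 90% utilization against a 60% target scale out to six. Six servers at 20% scale back to the floor of two.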

Scalability and load management

Designing for high availability goes hand-in-hand with designing for scalability. A system might be “available” in the sense of not crashing, but if it cannot handle peak load and becomes slow or unresponsive, it’s essentially down. Therefore, high-performance, low-latency systems must manage load gracefully. Techniques such as load balancing distribute incoming requests across multiple servers so no one server gets overwhelmed. Workloads are parallelized and partitioned so that as demand grows, additional nodes share the burden without a drop in performance.
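Round-robin is one of the simplest load-balancing policies: each new request goes to the next server in rotation, skipping any server that failed a health check. This sketch is illustrative only. The backend names are hypothetical, and production balancers often weigh servers by current load or latency instead.

```python
import itertools


class RoundRobinBalancer:
    """Cycles through backends in order, skipping any marked unhealthy."""

    def __init__(self, backends: list[str]):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._cycle = itertools.cycle(self.backends)

    def mark_down(self, backend: str) -> None:
        self.healthy.discard(backend)

    def mark_up(self, backend: str) -> None:
        if backend in self.backends:
            self.healthy.add(backend)

    def pick(self) -> str:
        # At most one full pass is needed to find a healthy backend if any exists.
        for _ in range(len(self.backends)):
            candidate = next(self._cycle)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends")


if __name__ == "__main__":
    lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])
    lb.mark_down("app-2")  # e.g. a failed health check
    print([lb.pick() for _ in range(4)])
```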

Capacity planning is important. Enterprises often provision extra capacity or use cloud elasticity, so sudden traffic spikes won’t exceed the system’s throughput. The system should degrade gracefully under stress rather than fail completely, such as by shedding non-critical load or temporarily limiting certain functions rather than going offline.
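Shedding non-critical load can be as simple as reserving part of the capacity for critical requests. In this sketch, the priority labels, capacity, and reserve size are hypothetical. Rejected requests would typically receive a fast error, such as an HTTP 503, rather than queuing until they time out.

```python
def admit(priority: str, in_flight: int, capacity: int, reserved_for_critical: int = 20) -> bool:
    """Load shedding: keep headroom for critical traffic by rejecting other work early.

    Critical requests may use the full capacity; everything else is turned away once
    in-flight work reaches `capacity - reserved_for_critical`.
    """
    if priority == "critical":
        return in_flight < capacity
    return in_flight < capacity - reserved_for_critical


if __name__ == "__main__":
    for load in (50, 85, 100):
        print(load, admit("critical", load, 100), admit("recommendations", load, 100))
```

At 85 requests in flight out of 100, critical work is still admitted while optional work is turned away. The service degrades instead of falling over.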

Whether the architecture scales horizontally, by adding more servers, or vertically, by using more powerful resources, the goal is the same: keep the service available as usage fluctuates. Designs that account for performance under peak conditions keep the service reliably available when demand is highest.

Geographic distribution and disaster recovery

Even with redundancy and failover within one data center, localized disasters or outages can still bring a service down. That's why high availability architectures often use geographic distribution. This may mean running the service in multiple data centers in different regions, or using multiple availability zones of a cloud provider.

If one location experiences a catastrophic failure, such as a natural disaster or major network outage, the system routes traffic to another, unaffected location. Data replication across regions, often asynchronous, means a recent (though not necessarily up-to-the-second) copy of data is available at backup sites.
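Cross-region routing has to weigh both health and data freshness, because asynchronously replicated sites can trail the primary. The sketch below assumes each region reports its health, its latency to the client, and its replication lag. The `Region` fields, region names, and lag threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    healthy: bool
    latency_ms: float         # measured from the client's location
    replication_lag_s: float  # how far this region's asynchronous copy trails the primary


def choose_region(regions: list[Region], max_lag_s: float) -> Region:
    """Route to the lowest-latency healthy region whose data is recent enough."""
    candidates = [r for r in regions if r.healthy and r.replication_lag_s <= max_lag_s]
    if not candidates:
        raise RuntimeError("no healthy region within the acceptable replication lag")
    return min(candidates, key=lambda r: r.latency_ms)


if __name__ == "__main__":
    regions = [
        Region("us-east", healthy=False, latency_ms=20, replication_lag_s=0),  # failed site
        Region("us-west", healthy=True, latency_ms=70, replication_lag_s=2),
        Region("eu-west", healthy=True, latency_ms=90, replication_lag_s=30),
    ]
    print(choose_region(regions, max_lag_s=5).name)
```

The failed us-east site is skipped, and eu-west is rejected for lagging 30 seconds behind, so traffic goes to us-west. The acceptable lag reflects how much recent data the business can afford to lose in a failover.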

Designing a multi-region or multi-cloud setup is more complex, but it guards against the loss of an entire site. This approach is closely related to disaster recovery (DR) planning: while high availability handles smaller-scale failures automatically, DR is about recovering from large-scale incidents. Techniques here include hot standbys, which are systems in alternate locations that are already running and can take over quickly, and warm or cold standbys, which might need some time to spin up.

Regular backups, replicated databases, and failover drills are part of this preparedness. In an ideal case, users are never aware that one data center went down because the service keeps running from others, possibly with reduced capacity, until normal operations are restored. By spreading risk geographically and having robust recovery procedures, enterprises keep rare events from knocking out their service.

Redundancy, failover, monitoring, scalability, and geographic distribution work together to keep the service running. A highly available system anticipates that things will go wrong and handles those situations gracefully and automatically. With these design principles, services achieve uptime well above ordinary levels, delivering the reliability that enterprise users demand.

Section summary – Core architectural principles:

Redundancy and replication reduce single points of failure

Automated failover recovers quickly from component loss

Continuous monitoring and self-healing reduce incident impact

Scalability and load management prevent performance-based outages

Geographic distribution protects against regional disasters

Challenges and tradeoffs in high availability

Designing for maximum availability involves navigating important tradeoffs. One consideration is the cost and complexity. Pushing availability from, say, 99% to 99.99% or beyond requires much more redundancy, more sophisticated failover mechanisms, and meticulous operational discipline. Maintaining “five-nines” uptime (99.999%) demands investment in infrastructure, automation, and expertise.

Not every application justifies that level of spending, so engineering leaders must determine the appropriate availability target for each service based on its importance and the business impact of downtime. Highly available systems also tend to be complex, with many moving parts and interdependencies, which ironically introduce new ways to fail if not managed carefully.

Complexity leads to configuration mistakes or subtle bugs, so robust testing and change management are vital. Human error remains a leading cause of outages, so systems need to be as automated and foolproof as possible to reduce the chance that a mistake brings down the service.

Another challenge is the consistency versus availability tradeoff in distributed data systems. The CAP theorem states that a distributed system cannot simultaneously guarantee perfect consistency and availability in the presence of a network partition: Designers must choose to sacrifice one or the other when communication failures occur.

Many traditional relational databases choose strong consistency over availability; if they cannot be sure a transaction is up-to-date across the cluster, they would rather refuse a request, reducing availability, than return possibly stale data. Conversely, many NoSQL databases, especially early ones, favored availability, so the system always responds, even if some responses might not reflect the latest writes. This approach avoids downtime during network splits or node failures, but at the cost of potential data inconsistency that developers then have to handle.
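The two behaviors can be contrasted with a toy replica that has lost contact with its peers. This is a deliberately simplified model, not how any real database implements CP or AP semantics. The class, its `mode` flag, and the versioning scheme are hypothetical.

```python
class Replica:
    """Toy replica showing the CP vs AP choice when a network partition occurs."""

    def __init__(self, mode: str):
        if mode not in ("CP", "AP"):
            raise ValueError("mode must be 'CP' or 'AP'")
        self.mode = mode
        self.value = None
        self.version = 0
        self.partitioned = False  # True when this replica cannot reach its peers

    def apply(self, value, version: int) -> None:
        """Accept a replicated write if it is newer than what we hold."""
        if version > self.version:
            self.value, self.version = value, version

    def read(self):
        if self.partitioned and self.mode == "CP":
            # Cannot confirm this is the latest value, so refuse: consistent but unavailable.
            raise RuntimeError("unavailable during partition")
        # AP: always answer, even though peers may hold a newer version.
        return self.value


if __name__ == "__main__":
    cp, ap = Replica("CP"), Replica("AP")
    for r in (cp, ap):
        r.apply("balance=100", version=1)
        r.partitioned = True  # link to peers is cut; a later write (version 2) never arrives
    print("AP read:", ap.read())
    try:
        cp.read()
    except RuntimeError as exc:
        print("CP read:", exc)
```

The AP replica answers with `balance=100` even though a newer write may exist elsewhere. The CP replica refuses to answer until it can confirm the latest value.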

High-performance data platforms often allow tuning this tradeoff, offering modes that prioritize consistency or availability as needed. The challenge for system architects is to provide as much consistency as the application requires without undermining the overall availability of the service. Achieving strong consistency typically involves more coordination between nodes, such as quorum acknowledgments for writes, which can affect performance and availability if not implemented carefully.
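Dynamo-style quorum replication is one widely used coordination scheme. With N copies, a write waits for W acknowledgments and a read consults R replicas. Choosing R + W > N guarantees that every read overlaps the latest acknowledged write. The sketch below only checks that rule and the resulting failure tolerance. Leader-based replication and consensus protocols are other options, so this is not necessarily what a given platform uses.

```python
def quorums_overlap(n: int, r: int, w: int) -> bool:
    """True if every read quorum shares at least one replica with every write quorum."""
    if not (1 <= r <= n and 1 <= w <= n):
        raise ValueError("quorum sizes must be between 1 and n")
    return r + w > n


def tolerated_failures(n: int, r: int, w: int) -> tuple[int, int]:
    """How many replicas can be down while reads and writes can still reach quorum."""
    return n - r, n - w


if __name__ == "__main__":
    for r, w in ((2, 2), (1, 3), (1, 1)):
        print(f"N=3 R={r} W={w}: overlap={quorums_overlap(3, r, w)}, "
              f"(read, write) failures tolerated={tolerated_failures(3, r, w)}")
```

With N=3, setting R=2 and W=2 gives consistent reads while tolerating one failed replica for either operation. W=3 also overlaps, but it blocks writes whenever any replica is down: the availability cost described in the paragraph above.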

There is also the overhead of redundancy to consider. Every extra replica of data or additional failover server improves reliability, but also uses more resources and requires more synchronization. For example, maintaining three live copies of data (replication factor 3) instead of two means higher storage costs and more network traffic on every write operation, because each write must be sent to three nodes.

This redundancy tax is often worth it for the resilience gained: triple replication still holds two copies after losing any one node, and it survives the loss of any two nodes without data loss. Some systems, however, find efficiencies to mitigate the cost. Many high-performance databases run effectively with a replication factor of 2, relying on fast failure detection and recovery to maintain availability with fewer copies.
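Back-of-the-envelope numbers put the cost and benefit of each extra copy side by side. The sketch assumes every copy lives on a different node and every write is sent to all copies. The record size and cluster size are arbitrary.

```python
def replication_profile(replication_factor: int, record_bytes: int,
                        cluster_nodes: int) -> dict[str, int]:
    """Back-of-the-envelope cost and resilience of a replication factor.

    Assumes each copy lives on a different node and every write goes to all copies.
    """
    if not 1 <= replication_factor <= cluster_nodes:
        raise ValueError("replication factor must be between 1 and the cluster size")
    return {
        "bytes_written_per_write": replication_factor * record_bytes,
        "storage_multiplier": replication_factor,
        "node_losses_without_data_loss": replication_factor - 1,
    }


if __name__ == "__main__":
    for rf in (2, 3):
        print(rf, replication_profile(rf, record_bytes=1_000, cluster_nodes=6))
```

Moving from a replication factor of 2 to 3 raises write traffic and storage by 50%. In exchange, the data survives two simultaneous node losses instead of one.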

Fewer replicas mean more of the cluster's capacity serves live traffic rather than acting as a spare, but they also leave less room for error if a node fails. The system must therefore quickly re-replicate data from a lost node to restore full redundancy, possibly pausing certain operations briefly to avoid inconsistency.
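Restoring redundancy after a node loss amounts to finding every partition that lost a copy and assigning it a new one. The sketch below is a simplified illustration with hypothetical node names. A real system would also stream the data to the new replica and handle failures that occur mid-repair.

```python
from collections import Counter


def rereplicate(assignments: dict[int, list[str]], failed: str, live_nodes: list[str],
                replication_factor: int) -> dict[int, list[str]]:
    """Remove a failed node from every partition and top replicas back up.

    Surviving replicas keep their order, so if the failed node was first (the primary),
    the next replica moves to the front: a simple stand-in for promotion. New copies go
    to the least-loaded live node to avoid piling the lost node's data onto one server.
    """
    load = Counter(n for reps in assignments.values() for n in reps if n != failed)
    repaired: dict[int, list[str]] = {}
    for partition, replicas in sorted(assignments.items()):
        survivors = [n for n in replicas if n != failed]
        while len(survivors) < replication_factor:
            options = [n for n in live_nodes if n != failed and n not in survivors]
            if not options:
                break  # cluster too small to restore the full replication factor
            choice = min(options, key=lambda n: (load[n], n))
            survivors.append(choice)
            load[choice] += 1
        repaired[partition] = survivors
    return repaired


if __name__ == "__main__":
    before = {0: ["a", "b"], 1: ["b", "c"], 2: ["c", "a"]}
    print(rereplicate(before, failed="b", live_nodes=["a", "c", "d"], replication_factor=2))
```

For that input, the call returns `{0: ['a', 'd'], 1: ['c', 'd'], 2: ['c', 'a']}`. Both new copies go to the previously empty node `d`, and partition 1 promotes `c` to primary.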

The general tradeoff is between reliability and efficiency: More redundancy improves fault tolerance and availability, but with diminishing returns and greater cost in infrastructure and potential performance. Engineers must choose an architecture that meets the availability requirements without introducing unnecessary overhead.

Finally, even the most well-designed system must contend with the unexpected. Widespread cloud outages or bugs in fundamental components can challenge the assumptions of a high availability design, so teams should maintain disaster recovery plans and routinely test failovers and backup restores.

High availability is an ongoing process of improvement: Teams analyze incidents and near-misses to harden the system further. Enterprise-grade service availability means balancing consistency against availability, redundancy against efficiency, and complexity against reliability. Getting it right yields a resilient service that keeps running in the face of failures, but getting there is a continuous engineering endeavor, not a one-time effort.

Section summary – Key tradeoffs:

Higher availability targets increase cost and system complexity

Consistency and availability must be balanced (CAP theorem)

Greater replication improves resilience but adds overhead

Automation and testing are essential to reduce human error risk

Improving service availability with Aerospike

Aerospike is a real-time data platform engineered for the production realities that threaten availability: volatile traffic, shifting access patterns, routine node failures, and the operational churn of always-on systems. For teams that cannot afford downtime or user-visible slowdowns, Aerospike keeps system behavior predictable when conditions change.

Aerospike reduces single points of failure through a shared-nothing cluster design. Every node in an Aerospike cluster is identical and capable of assuming responsibility if another node fails. Data is replicated across nodes, often at a replication factor of 2, so losing a server does not become a service outage.

When a node goes down, planned or unplanned, the cluster detects the failure, reroutes requests, and re-replicates data. Maintenance and hardware faults become routine operational events rather than emergencies.

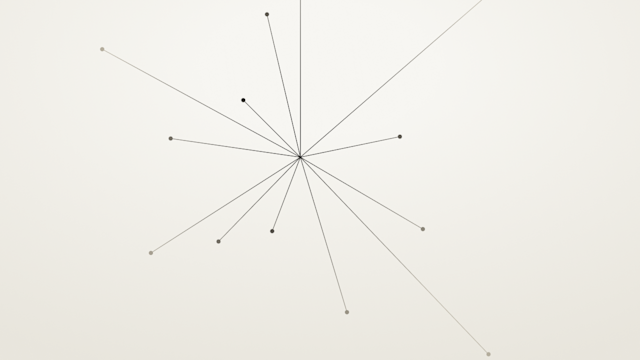

Availability, however, is not only about whether a service is reachable. In distributed architectures, effective downtime often appears as unpredictable latency spikes, cascading retries, and stalled user requests, particularly when one interaction fans out into dozens or hundreds of dependent operations. Under volatile demand, cache-dependent systems degrade in ways that are hard to predict, forcing teams to overprovision capacity or accept fragile performance tradeoffs.
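Fan-out amplifies tail latency quickly. Suppose each backend call independently has a 1% chance of exceeding its 99th-percentile latency. The chance that a user request touching N backends sees at least one slow call is then 1 - 0.99^N. The sketch below computes that. Independence is a simplifying assumption, since correlated slowdowns can make things better or worse.

```python
def prob_hits_tail(fan_out: int, per_call_tail_prob: float = 0.01) -> float:
    """Chance that at least one of `fan_out` independent backend calls is slow.

    With per_call_tail_prob=0.01, "slow" means slower than each backend's p99 latency.
    """
    return 1.0 - (1.0 - per_call_tail_prob) ** fan_out


if __name__ == "__main__":
    for n in (1, 10, 100):
        print(f"fan-out {n:>3}: {prob_hits_tail(n):.1%} of user requests see tail latency")
```

For fan-outs of 1, 10, and 100, the share of affected requests is about 1%, 9.6%, and 63.4%. That is why tail latency, not average latency, often decides whether a fanned-out service feels available.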

Aerospike is built to prevent this kind of availability degradation. It maintains predictable performance as demand grows, usage patterns evolve, and systems scale over time. Its client libraries are cluster-aware and route requests intelligently during topology changes. Its replication and failover mechanisms preserve continuity without introducing coordination overhead. And when correctness is required, Aerospike offers strong consistency options so teams protect data integrity without sacrificing operational stability.

For engineering teams evaluating how to protect user-facing systems under unpredictable conditions, the deeper question is not simply how to achieve high uptime, but how to get predictable behavior under stress. That means examining how tail latency behaves as request fan-out increases, how performance shifts when traffic spikes or cache locality changes, and how the system recovers during routine failures or scaling events. These runtime characteristics determine whether a service merely stays online or remains reliably responsive when it matters most.

Frequently asked questions about service availability

Find answers to common questions below to help you learn more and get the most out of Aerospike.

What is service availability?

Service availability is the percentage of time a system remains operational and accessible to users over a defined period. It is typically expressed as uptime (for example, 99.99%) and measured against a service level agreement (SLA). High availability means users can access and use the application without interruption, even during component failures or maintenance events.

How much downtime does 99.99% availability allow?

99.99% availability (often called "four-nines") allows for approximately 52 minutes of downtime per year. Each additional nine cuts the allowable downtime by a factor of ten: 99.999% ("five-nines") allows only about 5.26 minutes per year.

Why is service availability important?

Service availability affects revenue, customer trust, and operational continuity. Even brief outages can lead to lost transactions, productivity disruptions, reputational damage, and regulatory risk. For important systems such as financial platforms or emergency services, even seconds of downtime can be unacceptable.

How do you design for high availability?

Designing for high availability typically includes:

- Eliminating single points of failure

- Implementing redundancy and data replication

- Automating failover mechanisms

- Monitoring system health continuously

- Scaling capacity to handle peak demand

- Distributing infrastructure across multiple failure domains or regions

These architectural practices keep systems running even when individual components fail.

What is the difference between availability and reliability?

Availability measures how often a system is operational and accessible, usually expressed as a percentage of uptime. Reliability refers to the system's ability to function correctly without failure over time. A system can be reliable but still unavailable during maintenance, or available but unreliable if it frequently returns errors or inconsistent data.
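The standard steady-state formula links the two ideas: availability = MTBF / (MTBF + MTTR). Here MTBF (mean time between failures) reflects reliability, and MTTR (mean time to repair) reflects recovery speed. A minimal sketch with illustrative numbers:

```python
def steady_state_availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Fraction of time up, given mean time between failures and mean time to repair."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


if __name__ == "__main__":
    # Same failure rate (reliability), different recovery speed.
    for mttr in (1.0, 0.1):
        print(f"MTBF 1000 h, MTTR {mttr} h: {steady_state_availability(1000, mttr):.4%}")
```

With the same failure rate, cutting repair time from an hour to six minutes lifts availability from about 99.90% to 99.99%. This is why fast failure detection and failover improve availability even when components fail just as often.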

How does the CAP theorem affect availability?

The CAP theorem states that in a distributed system experiencing a network partition, you must choose between consistency and availability. Systems that prioritize consistency may reject requests to avoid returning stale data, reducing availability. Systems that prioritize availability will continue responding to requests, even if some responses may not reflect the most recent writes.

How does data replication improve availability?

Replication stores copies of data across multiple nodes. If one node fails, another replica serves requests, preventing service disruption. Higher replication factors improve fault tolerance but increase infrastructure and synchronization overhead.