Why tail latency dominates user experience in AI systems

Learn why tail latency beyond P99 drives user experience in AI systems and how fan-out, variance, and extreme latency events impact performance and stability.

For many years, system performance was discussed in terms of averages. Over time, engineers realized that averages hide important behavior, so the industry shifted toward percentile metrics such as P95 and P99 latency. These metrics are more useful because they capture the behavior of the slowest portion of requests, rather than the center of the distribution.

In AI systems, however, even P99 latency is often not good enough. Even when 99% of operations are fast, the remaining 1% still causes problems because of the way these systems execute requests.

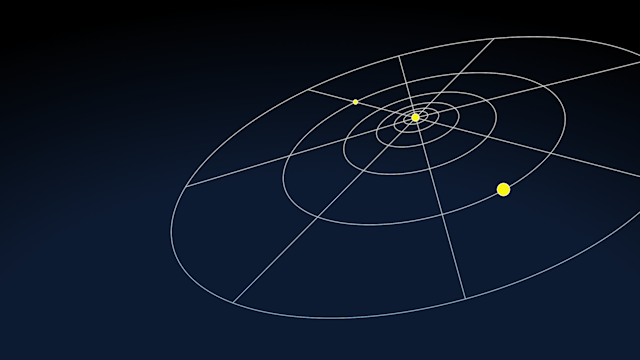

One user interaction rarely corresponds to one operation. Instead, it often triggers a chain of internal steps: model invocations, retrieval queries, database lookups, vector searches, tool calls, and downstream service requests. In more advanced systems, agents may perform multiple reasoning passes, generating additional queries as they refine an answer.

What appears to the user as one request is, internally, a distributed workflow made up of many dependent operations. In such systems, the extreme tail beyond P99 dominates the user experience.

The mathematics of fan-out

Here’s why. Imagine a database where 99% of requests complete within the target latency. That sounds good, right? Now imagine a user request that requires 100 independent database lookups.

The probability that all 100 calls fall within the fast 99% is 0.99^100 ≈ 0.366, or about 37%.

This means the probability that at least one lookup exceeds the P99 threshold is roughly 63%. A latency event that occurs only 1% of the time at the component level happens more than half the time at the application level.
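The arithmetic above generalizes to any fan-out. The following is a minimal sketch, assuming every call is independent and has the same chance of landing in the slow tail; the function name `prob_any_slow` is illustrative and does not come from any library.

```python
def prob_any_slow(p_slow: float, fan_out: int) -> float:
    """Probability that at least one of `fan_out` independent calls is slow.

    Assumes each call independently lands in the slow tail with
    probability `p_slow` (0.01 for the slowest 1% of calls).
    """
    return 1.0 - (1.0 - p_slow) ** fan_out


if __name__ == "__main__":
    # Illustrative fan-out values; real workloads will differ.
    for n in (1, 10, 100, 500):
        share = prob_any_slow(0.01, n)
        print(f"fan-out {n:>3}: {share:.1%} of requests include a slow call")
```

With `p_slow=0.01` and `fan_out=100`, the function reproduces the figure of roughly 63% given above. Real calls are rarely fully independent, so the result is best read as an estimate rather than a guarantee.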

This effect becomes even more pronounced when requests fan out further. AI applications frequently involve hundreds of internal operations. At that scale, even events in the P99.9 or P99.99 range show up regularly.

For example, if a database responds slowly only 0.01% of the time, or once in ten thousand operations, then across 100 independent calls there is already about a 1% chance that a given user request runs into it. The deeper the fan-out, the more likely it becomes that some component falls into its slow tail.
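One way to read this relationship in reverse is to ask how deep the fan-out must be before a rare event affects half of all requests. The sketch below solves 1 - (1 - p)^n >= 0.5 for n, again assuming independent calls with identical tail probability; `fan_out_for_half` is a hypothetical helper name.

```python
import math


def fan_out_for_half(p_slow: float) -> int:
    """Smallest number of independent calls at which at least half of
    all requests include one or more slow calls."""
    # Solve 1 - (1 - p_slow)**n >= 0.5 for n; log1p keeps precision
    # when p_slow is very small.
    return math.ceil(math.log(0.5) / math.log1p(-p_slow))


if __name__ == "__main__":
    tails = (("P99", 0.01), ("P99.9", 0.001), ("P99.99", 0.0001))
    for label, p_slow in tails:
        print(f"events beyond {label}: {fan_out_for_half(p_slow)} calls")
```

Under these assumptions, events beyond P99 reach half of all requests at 69 calls, and events beyond P99.99 do so at 6,932 calls.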

Why AI systems amplify tail latency

AI workloads make this worse for several reasons.

First, they tend to involve deep execution chains. One prompt may trigger retrieval pipelines, multiple model invocations, ranking steps, tool executions, and database queries.

Second, many of these steps depend on each other. One slow component delays the entire chain.

Third, AI systems often behave dynamically. Agents may generate additional queries as they reason about a problem, increasing the number of internal operations unpredictably.

Finally, these workloads frequently run across large datasets and distributed services, increasing the number of potential sources of variability.

Together, these factors mean that small variations in component performance quickly compound into visible delays.
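The compounding described above can be illustrated with a small simulation. This is a sketch under stated assumptions: each step takes 10 ms, except for 1% of cases where it takes 200 ms (both numbers are invented for illustration), steps are independent, and a request runs its steps one after another so that its latency is their sum.

```python
import random

FAST_MS = 10.0   # assumed typical step latency
SLOW_MS = 200.0  # assumed latency of a step in its slow tail
P_SLOW = 0.01    # assumed probability that a step is slow


def sample_latency_ms(rng: random.Random) -> float:
    """Draw the latency of one step from the two-point model above."""
    return SLOW_MS if rng.random() < P_SLOW else FAST_MS


def simulate(trials: int, steps: int, seed: int = 0) -> tuple[float, float]:
    """Return (share of requests with a slow step, mean request latency).

    Each request runs `steps` dependent operations in sequence, so its
    latency is the sum of the individual step latencies.
    """
    rng = random.Random(seed)
    delayed = 0
    total_ms = 0.0
    for _ in range(trials):
        latencies = [sample_latency_ms(rng) for _ in range(steps)]
        total_ms += sum(latencies)
        if max(latencies) > FAST_MS:
            delayed += 1
    return delayed / trials, total_ms / trials


if __name__ == "__main__":
    share, mean_ms = simulate(trials=5000, steps=20)
    print(f"{share:.1%} of requests delayed, mean latency {mean_ms:.0f} ms")
```

With a chain of 20 steps, the analytic expectation is that about 18% of requests contain at least one slow step (1 - 0.99^20), even though each step is slow only 1% of the time. The simulated share should land close to that value.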

Why P99 is no longer enough

Because of fan-out effects, improving P99 latency alone does not guarantee a good user experience. A database might have excellent P99 numbers, yet still show large delays at P99.9 or P99.99. When requests involve hundreds of internal calls, those deeper tail events become unavoidable. In other words, the effective latency experienced by the user is shaped by the extreme tail of the distribution, not the commonly reported percentiles.

What matters is not only how fast the system is most of the time, but how tightly its behavior is bounded across the entire distribution.
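To make the point concrete, the sketch below compares two invented latency samples that report the same P99 but diverge at P99.9. The data is fabricated for illustration only, and `percentile` is a simple nearest-rank helper written for this example rather than a library function.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    `pct` percent of all samples are less than or equal to it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return ordered[rank - 1]


# Two made-up systems, 10,000 latency samples each, in milliseconds.
system_a = [5] * 9800 + [8] * 200
system_b = [5] * 9800 + [8] * 180 + [500] * 20

for name, samples in (("A", system_a), ("B", system_b)):
    p99 = percentile(samples, 99)
    p999 = percentile(samples, 99.9)
    print(f"system {name}: P99 = {p99} ms, P99.9 = {p999} ms")
```

Both systems report a P99 of 8 ms, but system B's P99.9 is 500 ms while system A's stays at 8 ms. If calls are independent, a request that makes 100 calls to system B has roughly an 18% chance (1 - 0.998^100) of waiting on a 500 ms response.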

Designing systems that control the tail

Once systems reach this level of fan-out, the engineering goal changes. Instead of asking, “How good is the P99 latency?” engineers must ask, “How rare and how severe are the extreme tail events?”
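One possible way to put numbers on "how rare and how severe" is to measure both against a latency budget. The helper below is a hypothetical sketch: `tail_profile`, the budget value, and the sample data are all assumptions made for illustration.

```python
def tail_profile(samples_ms, budget_ms):
    """Summarize how rare and how severe over-budget calls are.

    Returns (rarity, severity): the fraction of samples above
    `budget_ms`, and the mean latency of those over-budget samples
    (0.0 when there are none).
    """
    if not samples_ms:
        raise ValueError("samples_ms must not be empty")
    over = [s for s in samples_ms if s > budget_ms]
    rarity = len(over) / len(samples_ms)
    severity = sum(over) / len(over) if over else 0.0
    return rarity, severity


if __name__ == "__main__":
    # Made-up sample: 9,980 calls at 5 ms and 20 calls at 500 ms.
    samples = [5.0] * 9980 + [500.0] * 20
    rarity, severity = tail_profile(samples, budget_ms=50.0)
    print(f"{rarity:.2%} of calls exceed the budget, averaging {severity:.0f} ms")
```

Tracking the two values separately shows whether a change made tail events less frequent, less severe, or both.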

Systems that maintain tightly bounded latency distributions have more predictable performance in fan-out environments. Even if their average or P99 performance looks similar to competing solutions, their user-facing behavior will be more stable.

Architectures that count on favorable cache hit rates or stable access patterns often struggle here. When data access becomes more random or workload patterns shift, slow events become more frequent, worst-case events become even worse, and those rare events show up to users more often.

Predictability becomes the real performance metric

In AI systems, performance is no longer defined primarily by peak throughput or even P99 latency, but by how predictable the system remains under changing conditions.

A database that is fast most of the time but occasionally slow will create inconsistent user experiences when requests fan out across many operations. A system with tightly bounded latency, even if its headline benchmarks look similar, will produce faster and more stable applications. The difference lies in controlling tail latency.

The real lesson of AI infrastructure

The rise of AI systems is revealing something fundamental about distributed computing.

For years, engineers optimized for scalability and availability. Those problems remain important, but AI brings another metric to the forefront: variance.

When applications depend on hundreds or thousands of internal operations, statistically rare events stop being rare and become inevitable. So the extreme tail of the latency distribution becomes the dominant force shaping user experience.