The pros and cons of multi-availability zone (multi-AZ) architecture

Learn how multi-AZ databases improve availability, fault tolerance, and performance for real-time enterprise systems. Explore architecture tradeoffs, consistency models, and operational considerations.

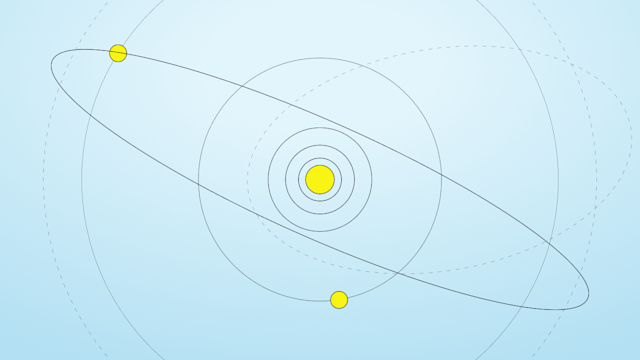

Cloud regions typically contain multiple availability zones (AZs): distinct data centers, each with separate power, cooling, and networking infrastructure. Each zone operates independently and is physically isolated from the others, so a localized disaster such as a power outage or flood does not affect more than one zone. At the same time, AZs in the same region are connected by high-bandwidth, low-latency networks.

This isolation-with-connectivity model lets databases span multiple AZs and tolerate data-center-level failures. However, while multi-AZ architecture is more resilient, it does not guarantee predictable performance. Latency between AZs is typically low on average, but it is not constant. Variability and tail latency still occur due to transient network congestion, coordination overhead, or recovery activity. As a result, a multi-AZ deployment does not eliminate performance jitter, even when it improves availability.

In a multi-AZ database deployment, the database instance or nodes are distributed across two or more AZs within the region to eliminate single points of failure at the data-center level. If one AZ goes down, instances in other zones continue to serve requests and make the data available. This design is more resilient for enterprise applications, but it’s not a panacea. Multi-AZ deployments protect against infrastructure outages, but not against inefficient data models, poorly tuned applications, or systemic load spikes.

Cloud providers often describe multiple availability zone setups as a best practice for workloads that require high uptime. However, redundancy across AZs comes at a cost. Redundant deployments require additional compute, storage, and cross-zone data transfer, all of which increase infrastructure expenses. Organizations must balance the availability benefits against higher costs.

Multi-AZ databases use the isolated-yet-connected nature of zones to be more fault-tolerant and durable. While this approach makes them more available and disaster-tolerant, it does not by itself ensure predictable tail latency, optimal cost efficiency, or simple operations. Keep these tradeoffs in mind when designing cloud data architectures.

High availability in multi-AZ deployments

High availability is the primary motivation for multi-AZ database deployments. By replicating data across zones, the database survives the loss of an AZ with little service interruption. In practice, this is achieved through redundancy and automated failover. While this makes outages less likely, multi-AZ deployments do not eliminate all forms of disruption. Failovers, re-routing, and recovery processes still introduce brief delays or degraded performance, particularly at the tail.

There are two common patterns for multi-AZ high availability: active-passive and active-active. Active-passive designs favor simplicity, with one node serving traffic and another standing by for failover. This approach reduces coordination overhead but does not avoid the pause that occurs while the passive system is promoted to active. Active-active designs keep all nodes serving traffic, reducing failover events, but they introduce additional coordination and consistency complexity. While active-active systems improve continuity, they do not simplify operations or guarantee stable latency under failure conditions.
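To make the active-passive pause concrete, here is a minimal sketch of one common detection rule: promote the standby only after several consecutive failed health checks. The function names, the threshold of 3, and the 5-second interval are illustrative assumptions, not the behavior of any particular database.

```python
def promotion_index(health_checks, failure_threshold=3):
    """Return the index of the health check at which the standby would be
    promoted, or None if the threshold is never reached.

    health_checks is a sequence of booleans (True = primary answered).
    The threshold is a hypothetical setting chosen for illustration.
    """
    consecutive_failures = 0
    for index, healthy in enumerate(health_checks):
        # Any successful check resets the failure streak.
        consecutive_failures = 0 if healthy else consecutive_failures + 1
        if consecutive_failures >= failure_threshold:
            return index
    return None


def detection_delay_seconds(check_interval_s, failure_threshold=3):
    """Minimum time spent detecting the failure before promotion starts."""
    return check_interval_s * failure_threshold


# One healthy check followed by a sustained outage of the primary.
outage = [True, False, False, False, False]
promoted_at = promotion_index(outage)
delay_s = detection_delay_seconds(check_interval_s=5)
```

Under these assumed settings, promotion happens on the third consecutive failure, so requests stall for at least the detection delay plus however long the promotion itself takes. Lowering the threshold shortens the pause but raises the risk of failing over on a transient blip.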

Regardless of the approach, a well-designed multi-AZ database improves fault tolerance and helps meet enterprise SLAs. However, multi-AZ deployments cannot guarantee zero impact during an AZ outage. Even infrequent zone-level failures may last for hours, and while the database may remain available, performance degradation and operational strain are still possible. High availability means outages are mitigated, not that user experience is preserved.

Performance and efficiency considerations

Designing a multi-AZ database involves balancing high availability with performance. Inter-AZ networks are designed to be fast, which makes synchronous replication feasible for many workloads. However, while average latency overhead may be small, multi-AZ deployments do not guarantee predictable tail latency. Additional network hops, coordination protocols, and recovery traffic introduce variability that becomes visible near the tail.
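The gap between average and tail latency is easy to see with a small calculation. The sketch below uses a nearest-rank percentile over a made-up sample of write latencies; the numbers are hypothetical and chosen only to show how a few slow cross-zone writes barely move the mean but dominate the 99th percentile.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical write latencies in milliseconds: 97 fast writes plus
# 3 slow ones (for example, during congestion or recovery traffic).
latencies_ms = [2.0] * 97 + [40.0, 55.0, 80.0]

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)
```

In this sample the median stays at 2 ms and the mean is under 4 ms, while the 99th percentile is 55 ms. Monitoring only averages would hide exactly the variability described above.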

Performance tuning is therefore essential. Serving reads locally and reducing cross-zone traffic reduces overhead, but this approach does not eliminate cross-zone dependencies. During failures or rebalancing, traffic patterns often change abruptly, and latency spikes despite otherwise careful design.

Cost efficiency is another limitation. Multi-AZ deployments use more resources than single-AZ architectures. Cross-zone data transfer, duplicate storage, and additional nodes cost more. While some systems work with fewer replicas, multi-AZ databases do not optimize for cost; how efficient they are depends on replication strategy, workload characteristics, and traffic locality.
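A rough way to reason about the cross-zone transfer component is to multiply write volume by the number of remote copies. The sketch below assumes every replica lives in a different AZ and that replication traffic is the only cross-zone traffic; the per-GB price is a placeholder, not a quoted rate from any provider.

```python
def cross_zone_gb(write_gb, replication_factor):
    """Data shipped across zones for replication, assuming each replica
    sits in a different AZ, so every copy beyond the first crosses a zone."""
    if replication_factor < 1:
        raise ValueError("replication_factor must be at least 1")
    return write_gb * (replication_factor - 1)


def transfer_cost(write_gb, replication_factor, price_per_gb):
    """Estimated cross-zone replication cost for the given write volume."""
    return cross_zone_gb(write_gb, replication_factor) * price_per_gb


# Placeholder price for illustration only; check your provider's pricing.
HYPOTHETICAL_PRICE_PER_GB = 0.01

estimate = transfer_cost(write_gb=1000, replication_factor=3,
                         price_per_gb=HYPOTHETICAL_PRICE_PER_GB)
```

The same model shows why traffic locality matters: reads served from a local replica add nothing to this figure, while reads that cross zones would need to be added on top.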

In summary, multi-AZ databases approach single-zone performance under ideal conditions, but they do not guarantee it. The availability gains come with tradeoffs in cost and performance variability that must be actively managed.

Consistency and data replication tradeoffs

One consideration when distributing a database across AZs is data consistency. Whenever data is copied to multiple places, you need to decide how tightly to synchronize those copies. The balance is between strong consistency and high availability, especially during failures; this tradeoff is described by the CAP theorem. A multi-AZ deployment requires partition tolerance (P): if a network disruption or zone outage occurs, the system must handle it rather than crash. Given that, the system leans toward either consistency (C) or availability (A) when a failure happens.

Many cloud databases choose availability as a priority during normal operations, using asynchronous replication across zones. For example, Amazon DynamoDB replicates data to multiple AZs, but it doesn’t make the writer wait for all copies to be updated before responding. As soon as the primary write is done, the client gets an OK, and the data propagates to the other zone(s) in the background, usually within a second or less. This delivers high availability because the system always accepts new writes, even if one replica is temporarily unreachable, but has only eventual consistency on reads. A read might hit a replica that hasn’t gotten the latest update yet, returning stale data.

DynamoDB and similar systems have eventual read consistency as the default, but let clients request a strongly consistent read if needed. A strongly consistent read returns the most recent committed data, often by querying the leader or a quorum of nodes. The tradeoff is latency: a strongly consistent operation may take longer and could fail or time out if a required replica is unreachable. In other words, consistency across AZs introduces network delay or reduced availability during outages.
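The difference between the two read modes can be shown with a toy model. The class below is a deliberately simplified sketch with one leader and one asynchronously updated follower; the class and method names are invented for illustration and do not reflect how DynamoDB or any other database is implemented.

```python
class ToyReplicatedStore:
    """Toy model: writes are acknowledged by the leader immediately and
    copied to a follower in another zone only when replicate() runs."""

    def __init__(self):
        self.leader = {}
        self.follower = {}
        self.pending = []  # acknowledged writes not yet on the follower

    def write(self, key, value):
        self.leader[key] = value
        self.pending.append((key, value))  # replication happens later

    def replicate(self):
        """Apply all pending writes to the follower, in order."""
        for key, value in self.pending:
            self.follower[key] = value
        self.pending.clear()

    def read(self, key, strongly_consistent=False):
        # Strong reads go to the leader; default reads hit the follower.
        source = self.leader if strongly_consistent else self.follower
        return source.get(key)


store = ToyReplicatedStore()
store.write("balance", 100)

stale = store.read("balance")                            # follower lags
fresh = store.read("balance", strongly_consistent=True)  # leader is current
store.replicate()
caught_up = store.read("balance")                        # follower now current
```

Before replication runs, the default read misses the new value while the strongly consistent read sees it. The price of the strong read in a real system is the extra hop to the leader or quorum, which is where the added latency and reduced availability come from.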

On the other hand, some systems favor consistency. In a CP approach, which prioritizes consistency and partition tolerance, the database doesn’t allow conflicting or divergent writes even if a network split isolates a zone, a situation called a zone partition. A common way to do this is to require a majority consensus, or quorum, for writes and reads.

For instance, with three replicas in three AZs, a CP system might require acknowledgments from at least two before committing a transaction. This way, if one AZ goes down, the database still functions with the majority, but during a zone partition, the minority side rejects operations to prevent inconsistency. The benefit is strong consistency, so clients never see outdated data or conflicting updates, but during some failures, the database becomes unavailable for writes on the minority side.

In our 3-AZ example, losing one AZ still leaves a majority, so the database continues operating; but if the network suffers a zone partition that splits 1 AZ from the other 2, the isolated zone stops accepting writes. Even worse, take a 2-AZ deployment using a strict quorum, meaning a majority of 2, which is 2. If either AZ fails, the system can’t reach quorum and must halt updates until the link is restored or the failed zone comes back. This is the consistency tradeoff.
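The quorum arithmetic in the 3-AZ and 2-AZ examples can be checked with a few lines. This is a minimal sketch that assumes one replica per AZ and a simple majority rule; the function names are illustrative.

```python
def majority(replicas):
    """Smallest number of replicas that forms a strict majority."""
    return replicas // 2 + 1


def can_commit(replicas, reachable):
    """True if the reachable replicas are enough to form a majority quorum."""
    return reachable >= majority(replicas)


# Three AZs, one replica each.
three_az_quorum = majority(3)            # 2
majority_side_ok = can_commit(3, 2)      # two zones still see each other
isolated_zone_ok = can_commit(3, 1)      # the single cut-off zone

# Two AZs, one replica each.
two_az_quorum = majority(2)              # 2
two_az_after_failure = can_commit(2, 1)  # one zone lost
```

With three zones the majority side keeps committing and the isolated zone does not. With two zones the quorum equals the replica count, so losing either zone stops writes, which is why quorum-based designs generally use an odd number of zones.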

For many enterprise workloads, a middle ground is acceptable or even preferable. Tunable consistency is one approach; some databases allow specifying how many replicas must confirm a read or write. This can be configured per query, giving flexibility between speed and safety. Another strategy is optimistic replication, which is synchronous under normal conditions, but able to fall back to async if a replica is slow or down. In normal, non-failure situations, all writes might be nearly synchronous, and all reads up-to-date, approaching strong consistency. Only during an AZ outage or split would the system either temporarily allow some stale reads or require a reconnection before accepting new writes, depending on design.
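For tunable consistency, a widely used rule of thumb in quorum-replicated systems is that reads see the latest acknowledged write when the read and write replica counts overlap, that is, when R + W > N. The sketch below applies that rule; the parameter names are generic and not tied to any specific database's configuration.

```python
def read_sees_latest_write(n, w, r):
    """True if every read set of size r must intersect every write set of
    size w among n replicas, which holds exactly when r + w > n."""
    if not (1 <= w <= n and 1 <= r <= n):
        raise ValueError("w and r must be between 1 and n")
    return r + w > n


N = 3  # one replica in each of three AZs (assumed layout)

fast_but_stale = read_sees_latest_write(N, w=1, r=1)      # favors speed
quorum_both = read_sees_latest_write(N, w=2, r=2)         # balanced
write_all_read_one = read_sees_latest_write(N, w=3, r=1)  # fast reads
```

Writing and reading with one replica each is the fastest setting but permits stale reads. Quorum reads and writes overlap in at least one replica, and writing to all replicas allows single-replica reads at the cost of write availability whenever any zone is down.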

Enterprises must decide what consistency guarantees their applications need. For instance, a banking application may need linearizable consistency, where each update is immediately visible globally and only ever applied once. Such a system would likely run in an active/passive multi-AZ mode or use a CP consensus with three zones to avoid any doubt. A real-time analytics or advertising platform, on the other hand, might tolerate eventual consistency with a slight lag in data propagation in exchange for getting the most throughput and uptime.

In all cases, multi-AZ databases are durable because they replicate data; no single point of failure should result in data loss. It’s the timing of replication that differs. With synchronous replication, durability and consistency are immediate, at the cost of more latency on each write. Asynchronous replication achieves durability by eventually copying data to the second zone, leaving a window, usually tiny, in which the newest data is stored in only one AZ.

Enterprises often combine approaches: using sync replication for critical data or within a region, and perhaps async replication to a farther region for disaster recovery. Cloud infrastructure makes even strongly consistent multi-AZ operations viable, because the network is fast enough that many systems commit across zones with only a few milliseconds of overhead. But those systems also tend to be complex and need three or more replicas.

Other databases, such as DynamoDB or Cassandra, choose simplicity but give up strict consistency for routine operations. There is no one-size-fits-all answer; the consistency versus availability tradeoff must be evaluated against the business requirements and latency tolerance of each application.

Operational considerations and scaling

Running a distributed database across multiple AZs is more complex than running it in a single zone. While well-designed systems automate many failure and recovery processes, multi-AZ deployments don’t simplify operations. Managing multiple zones means more things that can fail, more things to monitor, and more capacity-planning scenarios.

Automation and self-healing reduce manual intervention, but they do not eliminate operational risk. Recovery processes such as re-replication and rebalancing use more resources and temporarily affect performance. Scaling across AZs also requires coordination to maintain balance and fault tolerance, which adds planning overhead compared with single-zone deployments.

Moreover, operators must monitor not only node health but also inter-zone behavior, replication lag, and failover dynamics. Capacity planning must assume the loss of an entire AZ, which often means running with extra hardware. While these practices make the system more resilient, they are more complex and more expensive.
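The "extra hardware" implied by planning for the loss of an entire AZ follows from simple arithmetic: the surviving zones must carry the full peak load. The sketch below assumes load is spread evenly across zones and that nodes are interchangeable; the function names and node counts are illustrative.

```python
import math


def nodes_per_az(peak_nodes_needed, az_count):
    """Nodes to run in each AZ so the remaining AZs can still carry peak
    load after one AZ is lost (assumes even load distribution)."""
    if az_count < 2:
        raise ValueError("need at least two AZs to survive losing one")
    return math.ceil(peak_nodes_needed / (az_count - 1))


def overprovision_ratio(az_count):
    """Total provisioned capacity relative to what peak load requires."""
    if az_count < 2:
        raise ValueError("need at least two AZs to survive losing one")
    return az_count / (az_count - 1)


# A workload whose peak needs 12 nodes of capacity.
per_az_three_zones = nodes_per_az(12, az_count=3)  # 6 per AZ, 18 in total
per_az_two_zones = nodes_per_az(12, az_count=2)    # 12 per AZ, 24 in total
```

Under these assumptions, three zones require about 1.5 times the peak capacity and two zones require double. Adding zones reduces the overhead per zone, although it adds more zones to monitor and more cross-zone traffic to manage.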

Multi-AZ databases trade simplicity for resilience, and organizations must be prepared to manage that tradeoff to achieve reliable outcomes at scale.

Aerospike and multi-AZ databases

Multi-AZ architecture has become an essential approach for enterprises that cannot afford downtime or data loss. By replicating data across isolated availability zones, these systems are more resilient and available without sacrificing the low-latency performance that today’s real-time applications require. This balanced approach of combining fast in-memory or near-in-memory data access with durable cross-zone redundancy is what high-performance, always-on businesses need.

It’s also the approach Aerospike’s enterprise database platform uses. Aerospike is built from the ground up to thrive in multi-AZ and multi-cloud environments, delivering strong consistency, sub-millisecond response times, and self-healing reliability even across distributed infrastructure. Aerospike’s clustering technology manages data across zones and multiple regions, so developers and operators get reliable uptime and speed without extra complexity.

Aerospike’s proven deployment in situations ranging from fraud detection to telecom subscriber databases shows how effective a well-designed multi-AZ database is. By using innovations such as its patented Hybrid Memory Architecture and intelligent partitioning, Aerospike offers predictably low latency and throughput at scale, even as it replicates data for safety across availability zones.

This means enterprises have the best of both worlds: the peace of mind that comes with geo-redundancy and the performance of an in-memory system.
