The Circuit Breaker Pattern

This article is a deep dive into the problem that the Circuit Breaker pattern is designed to address, how it solves that problem, and how to implement the Circuit Breaker pattern in Aerospike applications.

The Circuit Breaker design pattern is used in distributed systems to provide fault tolerance and stability in architectures in which applications make remote calls, such as database operations, over a network connection. This application pattern is especially critical for maintaining stability in high-throughput use cases.

As network connectivity is prone to transient errors and outages, especially in cloud environments, high-performance database applications must be designed to be resilient to these types of failure scenarios.

Aerospike’s high-performance database client libraries implement the Circuit Breaker pattern by default so that application developers using Aerospike Database don’t have to worry about implementing the pattern themselves. However, tuning the circuit breaker threshold for a given use case is critical.

While not discussed here, Aerospike provides additional configuration policies that are important for high-performance applications. See the Aerospike Connection Tuning Guide for details on the configuration policies not discussed in this post.

The problem

Failures in which the application doesn’t get a response from the database within the expected time frame, such as a temporary network disruption, can amplify the load imposed on the entire system. Factors contributing to this load amplification include the increased time network connections remain open waiting on a response, the overhead of churning connections that time out, application retry policies, and back-pressure queueing mechanisms.

When applications perform high volumes of concurrent database operations at scale, this load amplification can be significantly greater than during steady-state. In extreme cases, this type of scenario can manifest as a metastable failure where the load amplification becomes a sustaining feedback loop that results in the failed state persisting even when the original trigger of the failure state (network disruption) has been resolved.

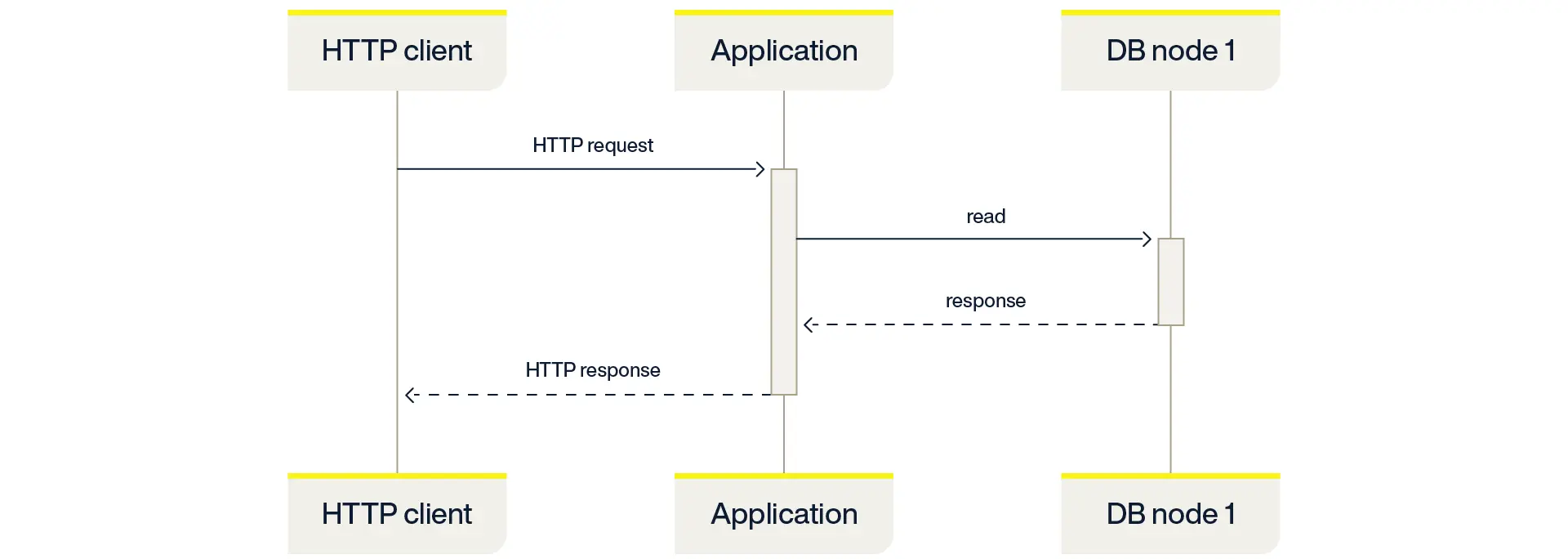

As a simple example, consider a typical HTTP-based API in which an HTTP request to the application results in a single database read as illustrated in the following sequence diagram:

This steady-state usage pattern creates a generally sustainable system load.

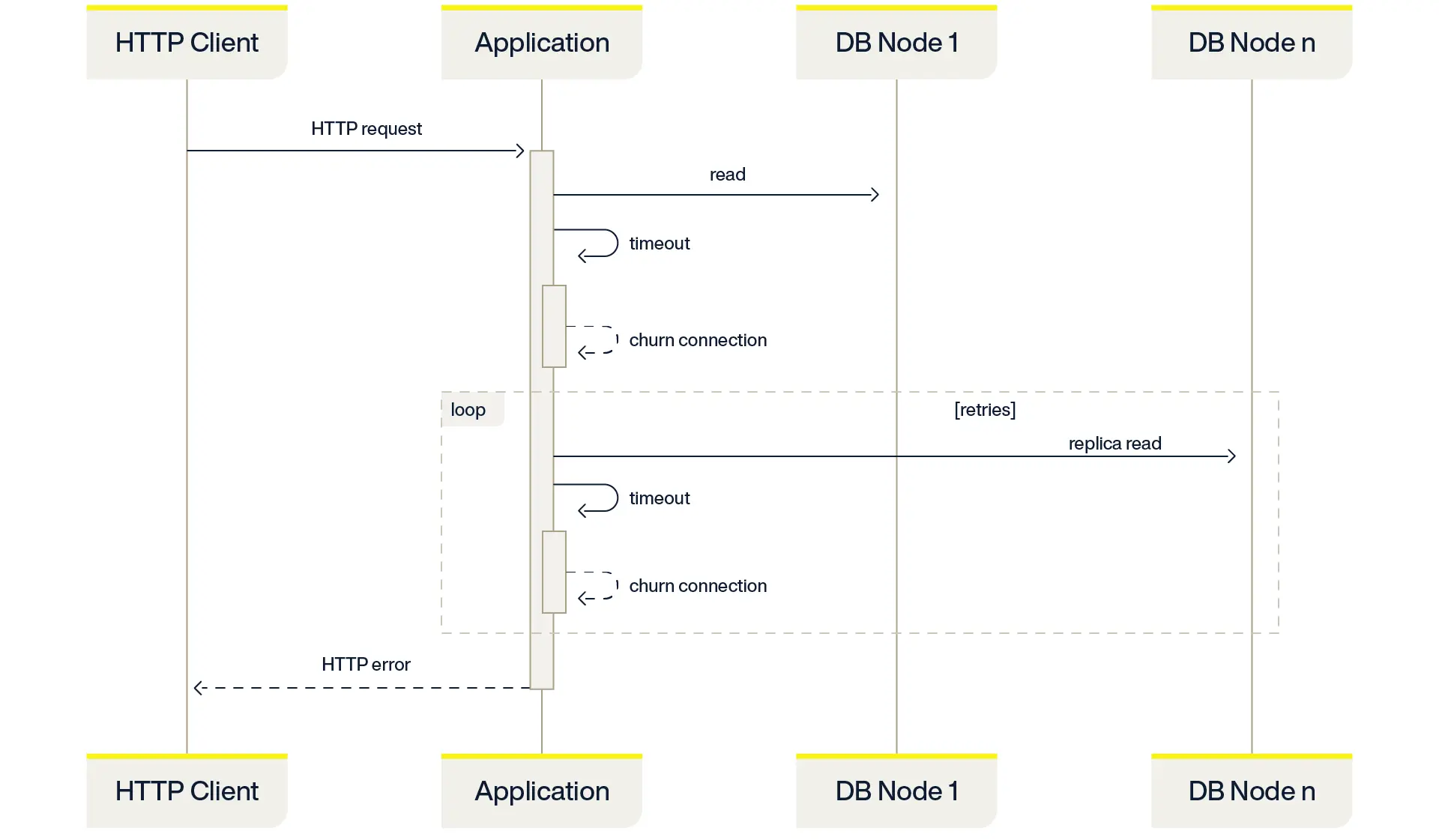

Consider what happens when a network failure results in database operations timing out. Because the application doesn’t know the nature of the network timeout, it might close the TCP connection and open a new one (connection churn). Churning connections can be expensive, especially when a TLS handshake is required. Database applications designed for high availability often retry read commands against replicas (Aerospike does this by default), and during this type of failure each retry could also time out and churn another connection.

The following sequence diagram illustrates connection churn:

Each attempted transaction consumes significantly more time and resources than it would during steady-state. The result is “load amplification” triggered by the network outage.

Now imagine hundreds or thousands of concurrent application threads performing hundreds, thousands, or millions of database operations per second. The cost of churning a connection adds up at scale. A network outage lasting mere seconds can trigger a metastable failure that lasts far beyond the trigger event, and the only way to recover is to reduce the load on the system. Simply scaling the system to handle the worst-case load amplification wouldn’t be practical or cost-effective.
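To put rough numbers on that amplification, the following is a minimal back-of-the-envelope sketch. It assumes a total outage in which every attempt times out and churns one connection, as described above. The class name, method names, and the sample values (1,000 operations per second, 2 retries, a 1,000 ms timeout) are hypothetical and chosen only for illustration.

```java
/** Back-of-the-envelope model of load amplification during a total outage. */
final class LoadAmplification {

    /** Connections churned per second when the first attempt and every retry time out. */
    static long churnPerSecond(long opsPerSecond, int retriesPerOp) {
        return opsPerSecond * (1 + retriesPerOp);
    }

    /** Milliseconds a single operation holds resources when every attempt times out. */
    static long millisPerFailedOp(long timeoutMillis, int retriesPerOp) {
        return timeoutMillis * (1 + retriesPerOp);
    }

    public static void main(String[] args) {
        // Hypothetical workload: 1,000 reads/s, 2 retries per read, 1,000 ms timeout.
        System.out.println(churnPerSecond(1000, 2));    // 3000 connections churned per second
        System.out.println(millisPerFailedOp(1000, 2)); // 3000 ms held per failed operation
    }
}
```

In steady state the same workload would reuse pooled connections and churn close to none, which is why the failure-mode load can dwarf what the system was sized for.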

The circuit breaker solution

Much like its namesake component in electrical systems, the Circuit Breaker pattern will “trip the breaker” when the number of failures in a given time period exceeds a predefined threshold. The idea is to put a hard limit on the impact of these types of failures — to break the feedback loop — and recover quickly and automatically once the underlying trigger is resolved.

The Aerospike implementation of the Circuit Breaker pattern is built into the Aerospike client libraries and enabled by default. It trips when there are 100 or more errors within an approximate 1-second window. You can specify both the threshold and the time window.

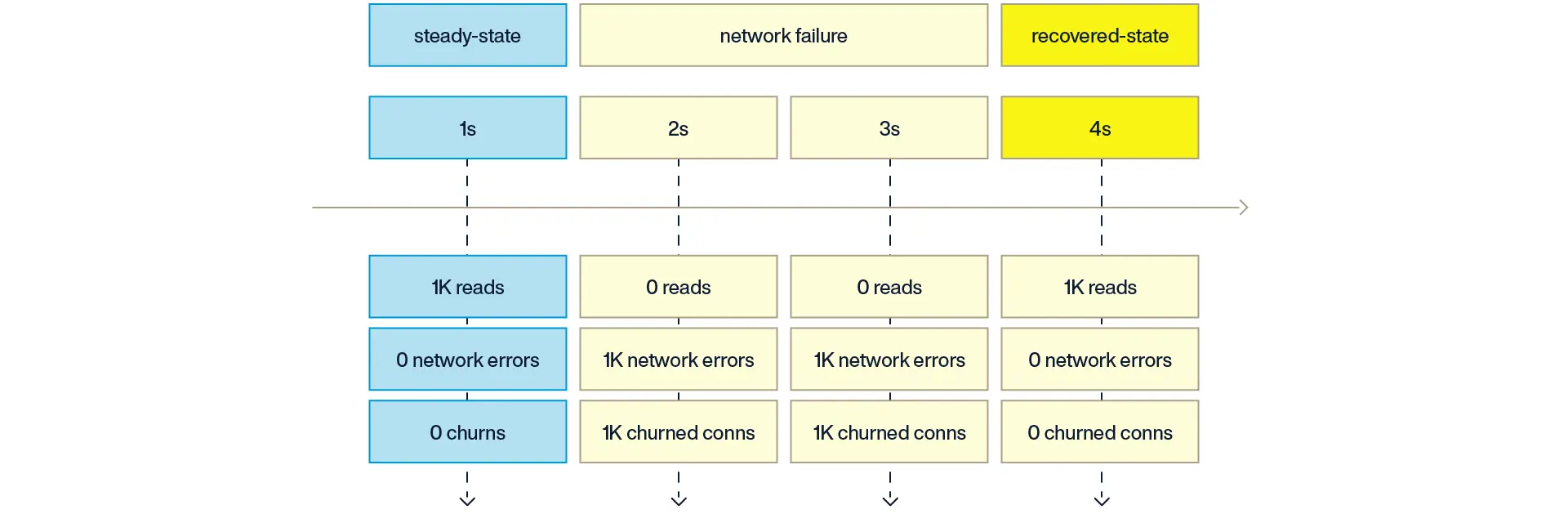

As a simplified example, assume an application is performing 1,000 database read commands per second and a network failure occurs that lasts for 2 seconds. During each of those seconds, while the network is failing, the application would receive 1,000 network errors, resulting in 1,000 churned connections.

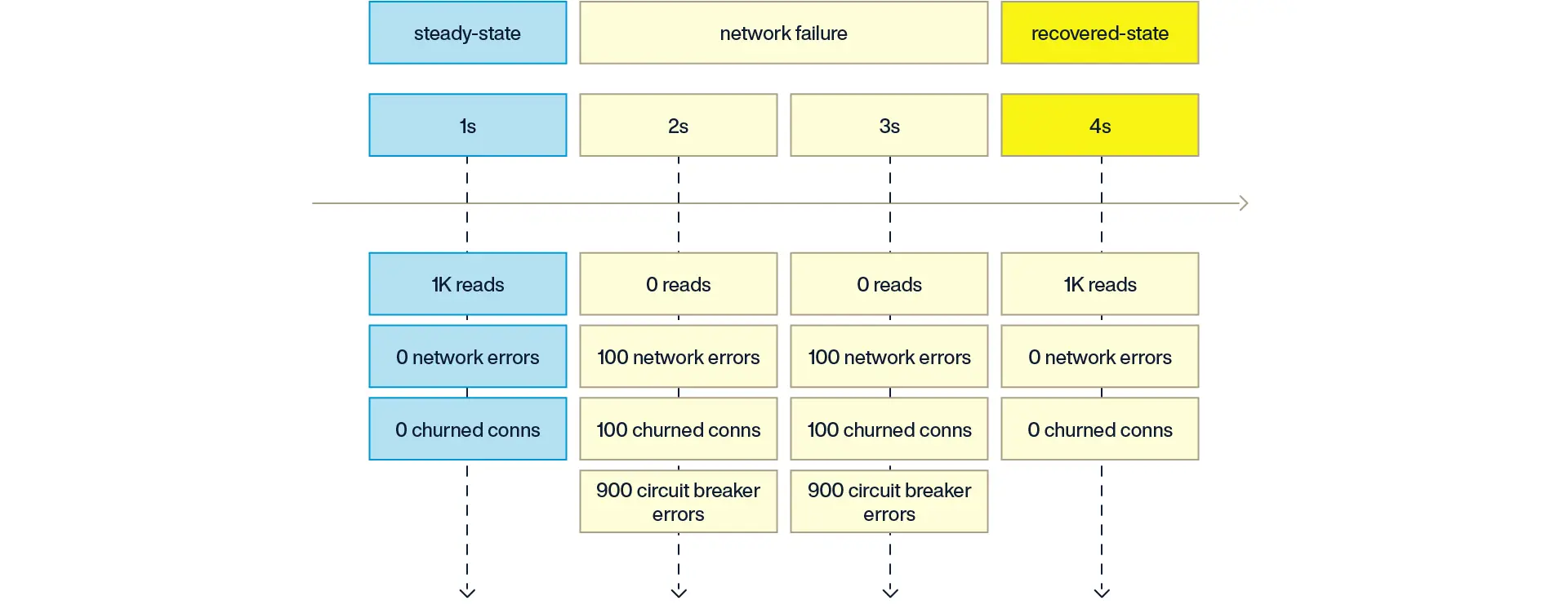

With the Circuit Breaker pattern in place, the application would only get network errors up to the configured threshold (100) each second, and the remaining 900 operations within that one-second window would immediately raise an exception rather than trying to connect to the database server. As a result, the application would only churn 100 connections each second rather than 1,000.

In other words, the impact of a network failure is limited to churning 100 connections per second, and the system only needs to be designed to handle that limited amount of load amplification.
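The arithmetic in this example can be reproduced with a small simulation. The sketch below is not the Aerospike client's internal implementation. It is a simplified fixed-window error counter, with hypothetical class and method names, that assumes every attempted operation during the outage fails and churns exactly one connection.

```java
import java.util.concurrent.atomic.AtomicInteger;

/** Illustrative fixed-window error counter; not the Aerospike client's internal code. */
final class ErrorRateBreaker {
    private final int maxErrorRate;                          // errors allowed per window
    private final AtomicInteger errors = new AtomicInteger();

    ErrorRateBreaker(int maxErrorRate) {
        this.maxErrorRate = maxErrorRate;
    }

    /** True while the error count for the current window is below the threshold. */
    boolean allowRequest() {
        return errors.get() < maxErrorRate;
    }

    /** Records one network error in the current window. */
    void recordError() {
        errors.incrementAndGet();
    }

    /** Starts a new window (once per simulated second in this sketch). */
    void resetWindow() {
        errors.set(0);
    }

    /** Returns the total connections churned during an outage in which every attempt fails. */
    static int simulateOutage(int opsPerSecond, int outageSeconds, int maxErrorRate) {
        ErrorRateBreaker breaker = new ErrorRateBreaker(maxErrorRate);
        int churned = 0;
        for (int second = 0; second < outageSeconds; second++) {
            breaker.resetWindow();
            for (int op = 0; op < opsPerSecond; op++) {
                if (breaker.allowRequest()) {
                    breaker.recordError(); // the attempt reaches the network and fails
                    churned++;
                }
                // Otherwise the operation fails fast without touching the network.
            }
        }
        return churned;
    }

    public static void main(String[] args) {
        System.out.println(simulateOutage(1000, 2, Integer.MAX_VALUE)); // 2000: effectively no breaker
        System.out.println(simulateOutage(1000, 2, 100));               // 200: threshold of 100 per second
    }
}
```

Over the 2-second outage, the breaker caps churn at 100 connections per second (200 in total) instead of 1,000 per second (2,000 in total).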

The application would then handle the exceptions raised when the circuit breaker has tripped according to the use case. For example, the application may abandon those operations as a hard failure, implement an exponential backoff, or save those operations in a dead letter queue to be replayed later.
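One way to combine two of those options is sketched below: retry with a capped exponential backoff, then park the operation in a dead letter queue if it still fails. Everything here is hypothetical scaffolding. `BreakerOpenException` stands in for whatever exception the client raises when the breaker has tripped, and the helper names and delay values are assumptions rather than part of any Aerospike API.

```java
import java.util.ArrayDeque;
import java.util.Queue;

/** Hypothetical stand-in for the exception raised while the circuit breaker is tripped. */
final class BreakerOpenException extends RuntimeException {
}

final class TrippedBreakerHandling {

    /** Delay before retry number {@code attempt} (0-based): base * 2^attempt, capped at maxMillis. */
    static long backoffMillis(int attempt, long baseMillis, long maxMillis) {
        if (attempt < 0) throw new IllegalArgumentException("attempt must be >= 0");
        if (attempt >= 62) return maxMillis;                   // avoid overflowing the shift
        long factor = 1L << attempt;
        if (baseMillis > maxMillis / factor) return maxMillis; // avoid overflowing the product
        return Math.min(maxMillis, baseMillis * factor);
    }

    /**
     * Runs the operation up to maxAttempts times, backing off between attempts.
     * If it never succeeds, the operation is added to the dead letter queue for later replay.
     */
    static boolean runOrPark(Runnable op, int maxAttempts, long baseMillis,
                             long maxMillis, Queue<Runnable> deadLetters) {
        for (int attempt = 0; attempt < maxAttempts; attempt++) {
            try {
                op.run();
                return true;
            } catch (BreakerOpenException e) {
                if (attempt == maxAttempts - 1) break;         // no point sleeping after the last try
                try {
                    Thread.sleep(backoffMillis(attempt, baseMillis, maxMillis));
                } catch (InterruptedException ie) {
                    Thread.currentThread().interrupt();        // preserve the interrupt and give up
                    break;
                }
            }
        }
        deadLetters.add(op);
        return false;
    }

    public static void main(String[] args) {
        Queue<Runnable> deadLetters = new ArrayDeque<>();
        boolean ok = runOrPark(() -> { throw new BreakerOpenException(); }, 3, 10, 100, deadLetters);
        System.out.println(ok + " " + deadLetters.size());     // false 1
    }
}
```

Capping the delay keeps retries from drifting arbitrarily far apart, and the dead letter queue keeps the failed work visible rather than silently dropping it. Which of these behaviors is appropriate depends on the use case.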

Tuning the Aerospike circuit breaker in Java

The Circuit Breaker pattern is enabled in the Aerospike Java client by default. However, you can tune the number of errors per tend interval (approx. 1 second) to suit your use case.

For example, to reduce the threshold from the default value of 100 errors per second to 25 errors per second:

    import com.aerospike.client.IAerospikeClient;
    import com.aerospike.client.Host;
    import com.aerospike.client.policy.ClientPolicy;
    import com.aerospike.client.proxy.AerospikeClientFactory;

    Host[] hosts = new Host[] {
        new Host("127.0.0.1", 3000),
    };

    ClientPolicy policy = new ClientPolicy();
    policy.maxErrorRate = 25; // default=100

    IAerospikeClient client = AerospikeClientFactory.getClient(policy, false, hosts);

The application would then handle the exceptions raised when the circuit breaker threshold has been tripped using the MAX_ERROR_RATE attribute of the ResultCode member of the exception:

    switch (e.getResultCode()) {
        // ...
        case ResultCode.MAX_ERROR_RATE:
            // Exceeded max number of errors per tend interval (~1s).
            // The operation can be queued for retry with something
            // like an exponential backoff, captured in a dead letter
            // queue, etc.
            break;
        // ...
    }

Example code

Aerospike provides an example Java application that demonstrates how the Circuit Breaker design pattern is implemented in Aerospike. It includes examples of error handling and instructions on simulating failures.

Tuning the circuit breaker threshold

While the default value of maxErrorRate=100 has been proven to protect against metastable failures by the Aerospike performance engineering team, the optimal value ultimately depends on the use case.

Setting the threshold effectively puts a cap on the number of connections that are churned per second (approximately) in a worst-case failure scenario. The cost of a connection churn can vary. For example, a TLS connection takes more resources to establish than a plain TCP connection. Logging a message on every failure is more expensive than incrementing a counter.

So the questions you have to ask are:

- How many connections opened or closed per second can each of my application nodes reasonably handle?
- How many connections opened or closed per second can each of my database nodes reasonably handle?

Every Aerospike client instance connects to every server node. So, with 50 application nodes and the default value of maxErrorRate=100, a single database node might see approximately 5,000 connections churned per second in the worst-case failure situation.

Consider what happens on the server when a connection churns. Depending on the type of failure, there might be a log message for the failure. When Aerospike authentication and audit logging are in use, there can also be an audit log message for the attempted authentication while connecting. Together, those two log messages could add up to approximately 10,000 log messages per second during this type of failure situation.

Can your logging stack handle that volume from each Aerospike node?
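The estimate above is simple multiplication, and it can be handy to keep it as a small helper while sizing a deployment. This sketch assumes one Aerospike client instance per application node and a worst case in which every client hits its threshold in the same second. The class and method names are hypothetical.

```java
/** Worst-case per-second load on a single database node when every client trips its breaker. */
final class WorstCaseChurn {

    /** Connections churned per second on one database node. */
    static long churnPerDbNode(int appNodes, int maxErrorRate) {
        return (long) appNodes * maxErrorRate;
    }

    /** Log messages per second on one database node, given log lines written per churned connection. */
    static long logMessagesPerDbNode(int appNodes, int maxErrorRate, int logsPerChurn) {
        return churnPerDbNode(appNodes, maxErrorRate) * logsPerChurn;
    }

    public static void main(String[] args) {
        System.out.println(churnPerDbNode(50, 100));          // 5000 churned connections per second
        System.out.println(logMessagesPerDbNode(50, 100, 2)); // 10000 log messages per second
    }
}
```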

Dropping the value down to maxErrorRate=5 would lower the worst case to approximately 250 churned connections per second on each database node, or roughly 500 log messages per second at two log messages per churned connection. The trade-off is that a larger share of the transactions during that window would have to be handled by the application (exponential backoff, dead letter queue).

That trade-off is acceptable if you leverage other techniques for resiliency at scale, such that the Circuit Breaker pattern is a last resort for only the most extreme failure scenarios. Less severe disruptions, such as high packet loss, single node failures, and short-lived latency spikes, can then be mitigated without tripping the circuit breaker in the first place.

The policies that provide additional resilience at scale are documented in the Aerospike Connection Tuning Guide. For an in-depth explanation of how they work, see the blog post Using Aerospike policies (correctly!).

Key takeaways

- For large-scale and high-throughput applications, it is critical for applications to be designed for resilience to network disruptions.
- The Circuit Breaker design pattern protects the application and the database from failures that could otherwise overwhelm the system.
- The Aerospike client libraries implement a circuit breaker pattern and expose it to developers as a simple policy configuration.

Read the original blog post.